June 10, 2026

GlassFish 8.0.3 Released: Performance optimizations and security fixes

by Ondro Mihályi at June 10, 2026 04:27 PM

The latest version of Eclipse GlassFish, 8.0.3, was released on June 7, 2026. This update focuses heavily on security improvements across various components and introduces significant performance optimizations for both Jakarta Faces rendering and Embedded GlassFish startup times. The release of GlassFish 8.0.3 further reinforces that GlassFish is an actively maintained, enterprise-grade production platform.

Security fixes and brute force prevention

For production deployments, security is paramount. GlassFish 8.0.3 brings several critical security fixes and a new mechanism to prevent brute-force attacks on admin interfaces.

The most notable security improvement is the fix for CVE-2024-9342 (6.3 MEDIUM), a vulnerability that allowed login brute-force attacks against the admin interface. The fix covers all admin interfaces: Admin Console, CLI, and HTTP. The login mechanism now progressively delays responses to failed login attempts from the same caller IP address, making brute-force password guessing impractical and time-consuming. It includes anti-DDoS measures that prevent attackers from locking out the admin interfaces, and it supports cluster and proxy setups so that the original caller is identified correctly and safely. Local connections are always let through, so local administration (from localhost or via an SSH tunnel) is never delayed.
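To make the idea of progressive delays concrete, here is a minimal, hypothetical sketch of per-IP throttling with exponential backoff and a loopback bypass. It is not GlassFish's implementation: the class and method names are invented for illustration, and it does not model the anti-DDoS, cluster, or proxy handling described above.

```java
import java.net.InetAddress;
import java.time.Duration;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Illustrative sketch only, NOT GlassFish's code: exponential delay per caller IP,
// with loopback callers (localhost, SSH tunnels) never delayed.
class LoginThrottle {
    private final Map<String, Integer> failures = new ConcurrentHashMap<>();
    private final Duration baseDelay;
    private final Duration maxDelay;

    LoginThrottle(Duration baseDelay, Duration maxDelay) {
        this.baseDelay = baseDelay;
        this.maxDelay = maxDelay;
    }

    /** Delay to apply before answering the next login attempt from this caller. */
    Duration delayFor(InetAddress caller) {
        if (caller.isLoopbackAddress()) {
            return Duration.ZERO; // local administration is never delayed
        }
        int n = failures.getOrDefault(caller.getHostAddress(), 0);
        if (n == 0) {
            return Duration.ZERO;
        }
        // base * 2^(n-1), capped at maxDelay; the shift is capped to avoid overflow
        long millis = baseDelay.toMillis() << Math.min(n - 1, 20);
        return Duration.ofMillis(Math.min(millis, maxDelay.toMillis()));
    }

    void recordFailure(InetAddress caller) {
        failures.merge(caller.getHostAddress(), 1, Integer::sum);
    }

    void recordSuccess(InetAddress caller) {
        failures.remove(caller.getHostAddress());
    }
}
```

Delaying responses per IP, rather than locking the account, means an attacker mainly slows down their own attempts. A production version would also need to bound or expire the per-IP map so that it cannot grow without limit.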

In addition, GlassFish 8.0.3 includes fixes for several other CVEs in Grizzly and JAXB Impl that have not yet been officially published. These address different areas of the server, including an HTTP request smuggling attack and a […]

147th airhacks tv: Local LLMs, LightMetal, ZSmith Agents, AI Rails, Saving Tokens

June 10, 2026 03:11 AM

"Reflection and annotations: less needed at runtime as infrastructure becomes less dynamic, but annotations still valuable for type-safe metadata that LLMs can consume, AI token maxing and code quality: degraded LLM performance on chaotic codebases versus well-structured J2EE applications, building a career in Java with focus on continuous improvement and transferable fundamentals across languages, LightMetal: a thin Java 25 wrapper around llama.cpp using FFM bindings to run models locally with zero external dependencies and GPU-based inference, the ZSmith agent: an agentic stack with local models, BCE architecture, virtual-thread sub-agents, and a sandboxed file system implemented as Java tools, agent sandboxing via tiny Java tools with allow/deny/confirm permissions, AI Rails refactoring: extracting shared Java conventions into a reusable skill consumed by Java CLI, MicroProfile server, and BCE skills, standards and inference cost: Jakarta EE and MicroProfile are version-stable and well-known to LLMs, reducing token usage by an estimated 30-80 percent, API/SPI separation prevents hallucinations during code generation, a live demo generating a MicroProfile/Jakarta EE business component with Cloud Code, system tests, and a continuous testing loop, web frontends with web components and CSS instead of Angular or React, revisiting episode 47 from eight years prior: SSH database authentication, JSF, WAR modularization, identity preservation, JDBC revival, long-running transactions, and parallels to AI agents, workflow engines and agents: XState and AWS Step Functions specs as lightweight state-machine approaches"

June 09, 2026

lightmetal: GPU LLM Inference From a Single Java 25 JAR

June 09, 2026 06:53 AM

GPU LLM inference on Apple Silicon, packaged as one Java 25 executable JAR, zero dependencies. lightmetal binds a Metal-enabled libllama.dylib through the Foreign Function & Memory API and runs Mistral- and Gemma-architecture GGUF models locally.

Build it with zb, point it at a GGUF, prompt it:

```shell
zb build
java --enable-native-access=ALL-UNNAMED -jar zbo/lightmetal.jar \
  -model ~/models/Mistral-Medium-3.5-128B-UD-Q5_K_XL-00001-of-00003.gguf \
  -prompt "What is Java?"
```

Add -serve and the same JAR exposes an Anthropic-compatible POST /v1/messages and an OpenAI-compatible POST /v1/chat/completions.

Existing clients (zsmith, vibe) only need a base URL switch: the loaded GGUF wins, and the model field is accepted but ignored.
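For a client written against the JDK alone, a call to the OpenAI-compatible endpoint could look roughly like this. It is a sketch under assumptions: the post does not state the host or port, so the base URL is a placeholder, the class name is made up, and the request body follows the usual OpenAI chat-completions shape.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Sketch of a JDK-only client for the OpenAI-compatible endpoint of `lightmetal.jar -serve`.
class ChatCompletionsClient {

    // Minimal JSON string encoding: quotes, backslashes and control characters.
    static String jsonString(String s) {
        StringBuilder sb = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"' -> sb.append("\\\"");
                case '\\' -> sb.append("\\\\");
                case '\n' -> sb.append("\\n");
                case '\r' -> sb.append("\\r");
                case '\t' -> sb.append("\\t");
                default -> {
                    if (c < 0x20) {
                        sb.append(String.format("\\u%04x", (int) c));
                    } else {
                        sb.append(c);
                    }
                }
            }
        }
        return sb.append('"').toString();
    }

    static HttpRequest buildRequest(URI baseUrl, String prompt) {
        // OpenAI-style requests carry a "model" field; per the post, lightmetal accepts and ignores it.
        String body = "{\"model\": \"local\", \"messages\": [{\"role\": \"user\", \"content\": "
                + jsonString(prompt) + "}]}";
        return HttpRequest.newBuilder(baseUrl.resolve("/v1/chat/completions"))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(body))
                .build();
    }

    public static void main(String[] args) throws Exception {
        // Hypothetical default; pass the real base URL of your lightmetal server as the first argument.
        URI base = URI.create(args.length > 0 ? args[0] : "http://localhost:8080");
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(buildRequest(base, "What is Java?"), HttpResponse.BodyHandlers.ofString());
        System.out.println(response.statusCode() + " " + response.body());
    }
}
```

Keeping request construction separate from sending makes it easy to point the same code at a hosted API or at the local JAR by changing only the base URL.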

Embedding into another Java app needs no compile-time dependency. lightmetal.jar registers a BinaryOperator via META-INF/services:

```java
import java.util.ServiceLoader;
import java.util.function.BinaryOperator;

var generator = ServiceLoader.load(BinaryOperator.class).iterator().next();
var response = generator.apply("/path/to/model.gguf", "What is Java?");
```
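Because BinaryOperator is a general-purpose JDK interface, iterator().next() throws NoSuchElementException when no provider is on the classpath, and simply returns whichever provider comes first if several libraries register one. A slightly more defensive consumer, sketched here with a made-up class name, uses ServiceLoader.findFirst() (Java 9+) and casts to BinaryOperator&lt;String&gt;, assuming, as the snippet above suggests, that lightmetal's operator takes a model path and a prompt and returns the generated text.

```java
import java.util.Optional;
import java.util.ServiceLoader;
import java.util.function.BinaryOperator;

// Sketch of a defensive consumer; the (modelPath, prompt) argument order follows the snippet above.
class LightmetalClient {

    // Returns the first BinaryOperator registered under META-INF/services,
    // or Optional.empty() when lightmetal.jar (or any other provider) is not on the classpath.
    @SuppressWarnings("unchecked")
    static Optional<BinaryOperator<String>> findGenerator() {
        return ServiceLoader.load(BinaryOperator.class)
                .findFirst()
                .map(op -> (BinaryOperator<String>) op);
    }

    public static void main(String[] args) {
        Optional<BinaryOperator<String>> generator = findGenerator();
        if (generator.isEmpty()) {
            System.err.println("No BinaryOperator provider found; is lightmetal.jar on the classpath?");
            return;
        }
        String response = generator.get().apply("/path/to/model.gguf", "What is Java?");
        System.out.println(response);
    }
}
```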

Just Java 25, llama.cpp, FFM, Metal — and a GGUF on disk.

June 07, 2026

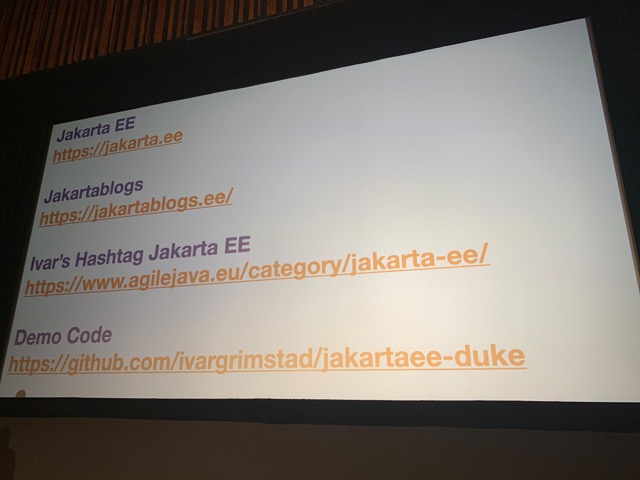

Hashtag Jakarta EE #336

by Ivar Grimstad at June 07, 2026 09:59 AM

Welcome to issue number three hundred and thirty-six of Hashtag Jakarta EE!

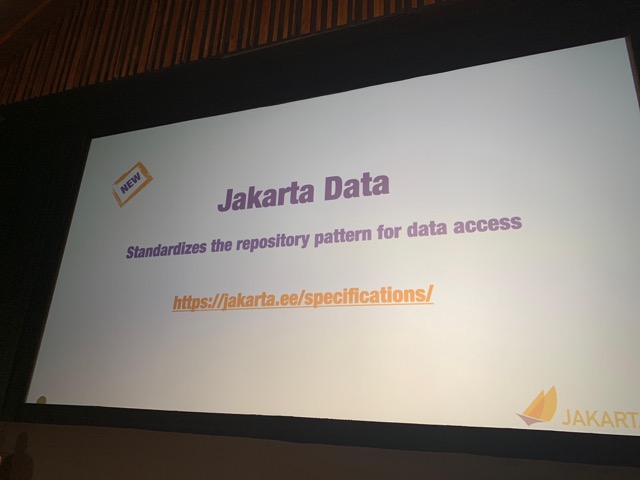

At the Jakarta EE Platform call this week, Otavio gave a presentation about Jakarta NoSQL and why it matters for developers today, particularly in the context of Artificial Intelligence. Retrieval Augmented Generation (RAG) is a technique that gives the AI model more context by providing domain-specific data, and vector databases are the most efficient way of providing this information. Jakarta NoSQL will provide standardised access to NoSQL data stores, including vector databases.
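For readers new to RAG, the retrieval step a vector database performs can be sketched in a few lines: keep one embedding (a vector of numbers, produced by an embedding model not shown here) per document, and return the documents whose embeddings are most similar to the query's, here by cosine similarity. This is a brute-force, in-memory illustration with made-up names; it is not the Jakarta NoSQL API, and real vector databases typically use approximate nearest-neighbour indexes instead of sorting every entry.

```java
import java.util.Comparator;
import java.util.List;

// Illustrative in-memory stand-in for a vector store; not the Jakarta NoSQL API.
class TinyVectorStore {
    record Doc(String text, double[] embedding) {}

    static double cosine(double[] a, double[] b) {
        if (a.length != b.length) {
            throw new IllegalArgumentException("dimension mismatch");
        }
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        // NaN for a zero vector; real code would reject or normalise such inputs.
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }

    /** The k stored documents most similar to the query embedding, best first. */
    static List<Doc> topK(List<Doc> docs, double[] query, int k) {
        return docs.stream()
                .sorted(Comparator.comparingDouble((Doc d) -> cosine(d.embedding(), query)).reversed())
                .limit(k)
                .toList();
    }
}
```

The retrieved texts are what a RAG pipeline would then add to the model's prompt as extra context.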

I know it is a little early, but work on JakartaOne Livestream 2026 started a while ago. The program committee has been established, and the CFP will open soon. Stay tuned to find out when it starts. The format will be the same as in previous years, which means that we have around 10 talk slots available.

May 31, 2026

Hashtag Jakarta EE #335

by Ivar Grimstad at May 31, 2026 11:21 AM

Welcome to issue number three hundred and thirty-five of Hashtag Jakarta EE!

I am currently in Cluj for Cluj Innovation Days 2026. The main event was on Thursday and Friday, and this weekend I have been attending a satellite event in a nearby village called Sâncraiu. More about the event will follow in a separate post shortly.

The Jakarta EE Platform call this week was a fairly short and efficient one. We are pretty much on track with the plan to release Core Profile later this year, with Web Profile and Platform following shortly thereafter. Nightly snapshots of the Jakarta EE API are now published to Maven Central:

```xml
<dependency>
    <groupId>jakarta.platform</groupId>
    <artifactId>jakarta.jakartaee-api</artifactId>
    <version>12.0.0-SNAPSHOT</version>
    <scope>provided</scope>
</dependency>
```

June will be a much calmer month for me when it comes to conferences. I have only one event planned for the end of June. This will be a hackathon at the University of Cagliari where we will have a Jakarta EE challenge. Check out Code the Continuum for details.

May 25, 2026

Sane API error handling with RFC 9457 Problem Details in Jakarta EE

by Rustam Mehmandarov at May 25, 2026 08:00 AM

When every API ends up with its own error format, it quickly gets annoying for anyone who has to consume more than one of them. RFC 9457 defines a standard envelope for HTTP API errors. Let’s have a look at how to use it in Jakarta EE, first with a small hand-made ProblemDetail plus one ExceptionMapper per error category and then with the Zalando Problem library, followed by quick notes on Quarkus and Spring as alternatives.

Introduction

If you’ve consumed more than one or two REST APIs, you’ve seen the pattern. One service returns {"error": "..."}, another {"message": "...", "code": 42}, a third returns 200 OK with an error hidden somewhere deep in the response. Your REST client code fills up with special cases for each one. Sound familiar?

RFC 9457 – Problem Details for HTTP APIs (the successor to RFC 7807) defines a single JSON envelope for errors, served with the application/problem+json media type. It is a small spec: five well-defined members (type, title, status, detail, and instance) plus extension members for anything else you might need.

```json
{
  "type": "urn:problem-type:validation-error",
  "title": "Validation Failed",
  "status": 400,
  "detail": "The request body or parameters failed validation.",
  "extensions": {
    "violations": [
      { "field": "title", "message": "Title is required" }
    ]
  }
}
```

TL;DR: Why RFC 9457?

Why not keep creating your own?

- Consumers already know the shape. Generated SDKs, gateways, log pipelines, and tracing tools can parse application/problem+json without extra work.

- You can extend it without breaking clients. Extension members are part of the spec – put what you need in there.

- It separates the category from the instance. type says “this is a validation error” (stable, machine-readable); detail and instance describe what happened this time.

💡 Note: RFC 9457 is just a JSON structure and a content type. No library or framework is required. That’s why there are so many implementations – and why a hand-made one is often a reasonable choice.

Let’s write some code!

I have created a repository called API Guide for Java to showcase the patterns for one of my talks. For this post, have a look at ProblemDetail.java and the mappers next to it under com/mehmandarov/confapi/error/.

1. Hand-made ProblemDetail + ExceptionMapper

What it looks like

Imagine you have a REST interface looking like this:

```java
@GET
@Path("/{id}")
@Operation(summary = "Get room by ID")
@APIResponse(responseCode = "200", description = "Room found")
@APIResponse(responseCode = "404", description = "Room not found")
public Room getById(
        @Parameter(description = "Room ID", required = true)
        @PathParam("id") String id) {
    return repo.findById(id)
            .orElseThrow(() -> new NotFoundException("Room not found: " + id));
}
```

Now, you can add a single ProblemDetail class – built around the five RFC 9457 elements and an extensions map – and one ExceptionMapper per error category.

```java
public class ProblemDetail {
    private URI type = URI.create("about:blank");
    private String title;
    private int status;
    private String detail;
    private URI instance;
    private final Map<String, Object> extensions = new LinkedHashMap<>();

    public static ProblemDetail of(int status, String title) { /* ... */ }
    public ProblemDetail withType(String typeUri) { /* ... */ }
    public ProblemDetail withExtension(String key, Object v) { /* ... */ }
    // + getters/setters
}
```
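RFC 9457 places extension members at the top level of the problem object, next to type, title, status, detail, and instance. Depending on how JSON-B or Jackson is configured, a class that keeps extensions in a separate map can end up serializing them nested under an "extensions" key instead. The following hypothetical variant (not the repo's code) implements the builder methods and exposes a toWireMap() that flattens everything into one map before serialization; the class name, withDetail, withInstance, and toWireMap are illustrative additions.

```java
import java.net.URI;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;

// Hypothetical variant of ProblemDetail for illustration; not the code from the API Guide repo.
class ProblemDetailSketch {
    // The five members RFC 9457 defines; extension members must not reuse these names.
    private static final Set<String> STANDARD_MEMBERS =
            Set.of("type", "title", "status", "detail", "instance");

    private URI type = URI.create("about:blank");
    private final String title;
    private final int status;
    private String detail;
    private URI instance;
    private final Map<String, Object> extensions = new LinkedHashMap<>();

    private ProblemDetailSketch(int status, String title) {
        this.status = status;
        this.title = title;
    }

    public static ProblemDetailSketch of(int status, String title) {
        return new ProblemDetailSketch(status, title);
    }

    public ProblemDetailSketch withType(String typeUri) {
        this.type = URI.create(typeUri);
        return this;
    }

    public ProblemDetailSketch withDetail(String detail) {
        this.detail = detail;
        return this;
    }

    public ProblemDetailSketch withInstance(String instanceUri) {
        this.instance = URI.create(instanceUri);
        return this;
    }

    public ProblemDetailSketch withExtension(String key, Object value) {
        if (STANDARD_MEMBERS.contains(key)) {
            throw new IllegalArgumentException("'" + key + "' is a standard member, not an extension");
        }
        extensions.put(key, value);
        return this;
    }

    /** One flat map: standard members first, then extension members at the same level. */
    public Map<String, Object> toWireMap() {
        Map<String, Object> wire = new LinkedHashMap<>();
        wire.put("type", type.toString());
        wire.put("title", title);
        wire.put("status", status);
        if (detail != null) wire.put("detail", detail);
        if (instance != null) wire.put("instance", instance.toString());
        wire.putAll(extensions);
        return wire;
    }
}
```

Returning toWireMap() as the response entity lets JSON-B or Jackson serialize a plain Map, so no custom serializer is needed to get the flat shape.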

The interesting part is how it gets used. As you can see from the resource code above, there is no try/catch in resources, ever – every exception is turned into a Problem Details response by an ExceptionMapper:

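```java
import jakarta.validation.ConstraintViolation;
import jakarta.validation.ConstraintViolationException;
import jakarta.ws.rs.core.Response;
import jakarta.ws.rs.ext.ExceptionMapper;
import jakarta.ws.rs.ext.Provider;
import java.util.List;
import java.util.Map;

@Provider
public class ConstraintViolationExceptionMapper
        implements ExceptionMapper<ConstraintViolationException> {

    @Override
    public Response toResponse(ConstraintViolationException ex) {
        List<Map<String, String>> violations = ex.getConstraintViolations()
                .stream().map(this::toMap).toList();
        ProblemDetail problem = ProblemDetail.of(400, "Validation Failed")
                .withType("urn:problem-type:validation-error")
                .withExtension("violations", violations);
        return Response.status(400)
                .type("application/problem+json")
                .entity(problem).build();
    }

    // Assumed helper body (not shown in the post): one {field, message} entry per violation.
    private Map<String, String> toMap(ConstraintViolation<?> v) {
        return Map.of("field", v.getPropertyPath().toString(), "message", v.getMessage());
    }
}
```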

One mapper per category keeps each file small and obvious: ConstraintViolationExceptionMapper → 400, NotFoundExceptionMapper → 404, NotAuthorizedExceptionMapper → 401, ForbiddenExceptionMapper → 403, and a CatchAllExceptionMapper → 500 that never leaks stack traces to clients.

⚠️ A word of caution: The catch-all mapper is the safety net for everything you forgot to handle. Without one, an uncaught exception ends up in the server’s default error page, which often includes stack traces, server versions, and sometimes filesystem paths. However, it might be a good idea to handle most of the common exceptions explicitly, and leave the generic catch-all for something truly unexpected.
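The important property of the catch-all mapper is what it leaves out. A minimal sketch of the body such a mapper could build, using only the JDK: the full exception goes to the server log under a random incident id, and the client receives a generic 500 problem that carries only that id. The class name and the incidentId extension member are hypothetical. In a JAX-RS ExceptionMapper&lt;Throwable&gt;, toResponse would wrap this map in Response.status(500).type("application/problem+json").entity(...), just as the validation mapper above does.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.UUID;
import java.util.logging.Level;
import java.util.logging.Logger;

// Hypothetical helper: builds the client-facing body for unexpected exceptions.
class SafeProblems {
    private static final Logger LOG = Logger.getLogger(SafeProblems.class.getName());

    static Map<String, Object> internalError(Throwable ex) {
        String incidentId = UUID.randomUUID().toString();
        // Message, stack trace and cause chain go to the server log only.
        LOG.log(Level.SEVERE, "Unhandled exception, incident " + incidentId, ex);

        Map<String, Object> body = new LinkedHashMap<>();
        body.put("type", "about:blank");
        body.put("title", "Internal Server Error");
        body.put("status", 500);
        body.put("detail", "An unexpected error occurred.");
        // Hypothetical extension member so a client report can be matched to the log entry.
        body.put("incidentId", incidentId);
        return body;
    }
}
```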

✅ Pros:

- Portable across runtimes. The same code runs on Quarkus, Helidon, and Open Liberty. No runtime-specific extension.

- No extra dependencies. RFC 9457 is just a JSON structure; you don’t need a library to emit one.

- Small, readable surface. The error model fits on one slide. When something goes wrong, you can read the source.

❌ Cons:

- You write the boilerplate yourself – one mapper per category.

- Nothing maps validation, WebApplicationException, or uncaught Throwable automatically – you wire each one up. (This can also be one of the pros, depending on the way you look at things.)

- No content negotiation between application/json and application/problem+json unless you add it yourself. (Spring, for example, has a built-in ProblemDetail that does this for you.)
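Adding that negotiation yourself is not much code. The sketch below (a hypothetical helper, not from the repo) picks the Content-Type for an error response from the request's Accept header: clients that accept application/problem+json, or a matching wildcard, get it; clients that only list application/json get the same body labelled as plain JSON. It deliberately ignores q-values to stay short.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Locale;

// Hypothetical helper: chooses the Content-Type for an error response from the Accept header.
class ProblemMediaType {
    static final String PROBLEM_JSON = "application/problem+json";
    static final String JSON = "application/json";

    static String choose(String acceptHeader) {
        if (acceptHeader == null || acceptHeader.isBlank()) {
            return PROBLEM_JSON; // no preference expressed
        }
        // Media ranges without parameters; q-values are ignored in this simplified version.
        List<String> ranges = Arrays.stream(acceptHeader.split(","))
                .map(r -> r.split(";")[0].trim().toLowerCase(Locale.ROOT))
                .toList();
        if (ranges.contains(PROBLEM_JSON) || ranges.contains("application/*") || ranges.contains("*/*")) {
            return PROBLEM_JSON;
        }
        if (ranges.contains(JSON)) {
            return JSON; // same body, labelled for clients that only list plain JSON
        }
        return PROBLEM_JSON; // nothing matched: still send the standard type
    }
}
```

In an ExceptionMapper, the header value could come from an injected @Context HttpHeaders via getHeaderString("Accept").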

💡 Want to know more? The full code, including all five mappers, lives in com/mehmandarov/confapi/error/.

2. Zalando Problem

What it looks like

The Zalando Problem library (org.zalando:problem + jackson-datatype-problem) gives you Problem and ThrowableProblem types and Jackson serialization. You still write an ExceptionMapper to bridge JAX-RS exceptions to Problem, but you don’t define the envelope yourself.

```java
import org.zalando.problem.Problem;
import org.zalando.problem.Status;

Problem problem = Problem.builder()
        .withType(URI.create("urn:problem-type:validation-error"))
        .withTitle("Validation Failed")
        .withStatus(Status.BAD_REQUEST)
        .with("violations", violations)
        .build();

return Response.status(400)
        .type("application/problem+json")
        .entity(problem).build();
```

✅ Pros:

- Cross-runtime. Works on Quarkus, Helidon, and Open Liberty – the same artifact deploys on all three.

- Used in production at Zalando (and elsewhere); the model handles cause chains, stack-trace processing, and a few edge cases you probably would not have thought of upfront.

- Jackson integration is done for you via jackson-datatype-problem.

❌ Cons:

- One more dependency to track and upgrade.

- You still write the ExceptionMappers – the library standardises the payload, not the wiring.

- If your stack is JSON-B rather than Jackson, you have a bit of extra work.

3. Quarkus: quarkus-http-problem

If you’re only targeting Quarkus, the quarkus-http-problem Quarkiverse extension is the shortest path. It auto-maps ConstraintViolationException, WebApplicationException, and uncaught Throwable to application/problem+json with no boilerplate from you.

✅ Pros:

- Add the dependency and you get Problem Details for exceptions. No need to write a mapper for each of them.

- Reasonable defaults for validation and security exceptions.

❌ Cons:

- Quarkus only. Doesn’t help on Helidon (Jersey) or Open Liberty (CXF). If “runs on every Jakarta runtime” is a requirement, this is out.

- Less visibility into what gets mapped to what – fine until you need to override a default.

4. Spring Boot – a short note

For completeness, we need to mention Spring Boot 3+ as well, which has Problem Details built in as org.springframework.http.ProblemDetail, with content negotiation and @ExceptionHandler integration already wired up. If you’re on Spring, just use it. The JSON structure is the same RFC 9457; only the wiring differs.

Conclusion

The point of RFC 9457 is not that there’s one correct implementation – there are several reasonable ones – but that there’s one correct envelope. Once your API speaks application/problem+json, clients stop hand-coding error parsers for each new service they consume.

A few rules of thumb:

- On Spring, use the built-in ProblemDetail.

- On Quarkus only, reach for quarkus-http-problem and move on.

- For cross-runtime Jakarta, choose between Zalando Problem (one dependency, more handled for you) and the hand-made approach (no dependencies, about 30 lines you fully understand).

I picked the hand-made approach for the demo project because portability across Quarkus, Helidon, and Open Liberty mattered, and because the ExceptionMapper is the demo – hiding it behind a library would have defeated the point of the talk.

However, “hand-made” doesn’t have to mean “everyone reinvents it from scratch”. Write it once, put it in a small internal library, and reuse it across services. That’s still less code than wiring up a third-party dependency in each runtime.

Summary Comparison

| Option | What it gives you | Runtimes | Dependency cost |
|---|---|---|---|
| Hand-made (this post) | ~30-line ProblemDetail + one mapper per error category. | ✅ Quarkus ✅ Helidon ✅ Open Liberty | None |
| Zalando Problem | Problem / ThrowableProblem types + Jackson serialization. You still write the mappers. | ✅ Quarkus ✅ Helidon ✅ Open Liberty | 1–2 artifacts |
| quarkus-http-problem | Auto-maps validation, WebApplicationException, and uncaught Throwable. No boilerplate. | ✅ Quarkus only | 1 extension |
| Spring ProblemDetail | Built into the framework. Content negotiation and @ExceptionHandler integration. | ✅ Spring Boot 3+ | None (built in) |

What’s Next?

Error handling is one of the bonus topics in the API Guide for Java. The same repo also covers OpenAPI documentation, security (RBAC, JWT), pagination, async, and versioning strategies – see my earlier post on API versioning in Java using JAX-RS.

Happy shipping of well-formed error messages, folks!

May 19, 2026

Jakarta EE 11, Spring Boot 4.0, and more in 26.0.0.5

May 19, 2026 12:00 AM

This release introduces official support for Jakarta EE 11 and Spring Boot 4.0 applications in Open Liberty, including enhanced Spring Boot deployment support, along with updated TLS/SSL cipher handling and simplified SSL cipher configuration.

In Open Liberty 26.0.0.5:

View the list of fixed bugs in 26.0.0.5.

Check out previous Open Liberty GA release blog posts.

Develop and run your apps using 26.0.0.5

If you’re using Maven, include the following in your pom.xml file:

```xml
<plugin>
    <groupId>io.openliberty.tools</groupId>
    <artifactId>liberty-maven-plugin</artifactId>
    <version>3.12.0</version>
</plugin>
```

Or for Gradle, include the following in your build.gradle file:

```groovy
buildscript {
    repositories {
        mavenCentral()
    }
    dependencies {
        classpath 'io.openliberty.tools:liberty-gradle-plugin:4.0.0'
    }
}
apply plugin: 'liberty'
```

Or if you’re using container images:

```dockerfile
FROM icr.io/appcafe/open-liberty
```

Or take a look at our Downloads page.

If you’re using IntelliJ IDEA, Visual Studio Code or Eclipse IDE, you can also take advantage of our open source Liberty developer tools to enable effective development, testing, debugging and application management all from within your IDE.

Jakarta EE 11 Core Profile, Web Profile, and Platform

Jakarta EE 11 Core Profile, Web Profile and Platform are now officially supported in Open Liberty! We’d like to start by thanking all those who provided feedback throughout our various betas.

Jakarta EE 11 marks a major milestone. It is the first Jakarta release to add a new specification to the platform since Java EE 8 in 2017 and, therefore, the first to provide a new component specification since the platform was taken over by the Eclipse Foundation. Among the many updates to existing Java specifications, it also removes all optional specifications and functions from the Platform. Liberty continues to support those optional specifications and functions when combined with Jakarta EE 11 features.

The Core Profile specification was introduced in Jakarta EE 10 to provide a profile for lightweight cloud native applications such as MicroProfile-based applications. With the introduction of Jakarta EE 11 support in this release, the MicroProfile 7.0 and 7.1 features also now work with Jakarta EE 11. You can run your MicroProfile 7 applications using either Jakarta EE 10 or Jakarta EE 11 features.

The following specifications make up the Jakarta Platform and the Core and Web profiles:

Jakarta EE Core Profile 11

| Specification | Updates | Liberty Feature Documentation |
|---|---|---|
| | Major update | |
| | Major update | |
| | Minor update | |
| | Minor update | |
| | Unchanged | |
| | Unchanged | |
| | Unchanged | |

Jakarta EE Web Profile 11

| Specification | Updates | Liberty Feature Documentation |
|---|---|---|
| | Major update | See previous table |
| | New | |
| | Major update | |
| | Major update | |
| | Major update | |
| | Minor update | |
| | Minor update | |
| | Minor update | |
| | Minor update | |
| | Minor update | |
| | Minor update | |
| | Minor update | |
| | Minor update | |
| | Unchanged | Not applicable |
| | Unchanged | |
| | Unchanged | |
| | Unchanged | Not applicable (see Javadoc) |

Jakarta EE Platform 11

| Specification | Updates | Liberty Feature Documentation |
|---|---|---|
| | Major update | See previous table |
| | Major update | |
| | Unchanged | |
| | Unchanged | |
| | Unchanged | |
| | Unchanged | |
| | Unchanged | |
| | Unchanged | Enterprise Beans 4.0 is unchanged, but the optional EJB 2.x function is no longer enabled when the enterpriseBeans-4.0 feature is configured with other Jakarta EE 11 features. Users who want to use EJB 2.x APIs must also add the enterpriseBeansHome-4.0 feature. |

Liberty provides convenience features for running all of the component specifications that are contained in the Jakarta EE 11 Web Profile (webProfile-11.0) and the Jakarta EE 11 Platform (jakartaee-11.0). These convenience features enable you to rapidly develop applications using all of the APIs contained in their respective specifications. For Jakarta EE 11 features in the application client, use the jakartaeeClient-11.0 Liberty feature.

To enable the Jakarta EE Platform 11 features, add the jakartaee-11.0 feature to your server.xml file:

```xml
<featureManager>
    <feature>jakartaee-11.0</feature>
</featureManager>
```

Alternatively, to enable the Jakarta EE Web Profile 11 features, add the webProfile-11.0 feature to your server.xml file:

```xml
<featureManager>
    <feature>webProfile-11.0</feature>
</featureManager>
```

Although no convenience feature exists for the Core Profile, you can enable its equivalent by adding the following features to your server.xml file:

```xml
<featureManager>
    <feature>jsonb-3.0</feature>
    <feature>jsonp-2.1</feature>
    <feature>cdi-4.1</feature>
    <feature>restfulWS-4.0</feature>
</featureManager>
```

To run Jakarta EE 11 features on the Application Client Container, add the following entry in your client.xml file:

```xml
<featureManager>
    <feature>jakartaeeClient-11.0</feature>
</featureManager>
```

Spring Boot 4.0

Open Liberty currently supports running Spring Boot 1.5.x, 2.x, and 3.x applications. With the introduction of the new springBoot-4.0 feature, users can now deploy Spring Boot 4.x applications. While Liberty has consistently supported Spring Boot applications packaged as WAR files, this enhancement extends support to both JAR and WAR formats for Spring Boot 4.x applications.

The springBoot-4.0 feature provides complete support for running a Spring Boot 4.x application on Open Liberty, as well as the ability to thin the application when building containerized applications.

To use this feature, users must be running Java 17 or later with Jakarta EE 11 features enabled. If the application uses servlets, it must be configured to use servlet-6.1. Include the following features in your server.xml file to configure the server.

```xml
<featureManager>
    <feature>springBoot-4.0</feature>
    <feature>servlet-6.1</feature>
</featureManager>
```

The server.xml configuration for deploying a Spring Boot application follows the same approach used in earlier Liberty Spring Boot versions.

```xml
<springBootApplication id="spring-boot-app" location="spring-boot-app-0.1.0.jar" name="spring-boot-app" />
```

As in earlier versions, the Spring Boot application JAR can be deployed by placing it in the /dropins/spring folder. The springBootApplication configuration in the server.xml file can be omitted when this deployment method is used.

Update to TLS/SSL Cipher support

Liberty now uses the effective cipher list from the JDK for SSL configuration. The securityLevel attribute in the SSL configuration is no longer used. In addition, the enabledCiphers attribute in the SSL configuration has been updated so that SSL ciphers can be customized more flexibly.

Liberty’s securityLevel based cipher categories no longer provide meaningful value. The MEDIUM and LOW categories contain no remaining ciphers.

The enabledCiphers attribute now has two mutually exclusive modes: (1) specify a custom list of ciphers separated by spaces, or (2) specify filter criteria to add (+) or remove (-) cipher suites from the effective JDK cipher list. If the value of enabledCiphers mixes a static entry with a +/- entry, an error is logged and the server ignores the enabledCiphers value, falling back to the effective JDK cipher list.

Existing Usage: A user sets securityLevel as HIGH

```xml
<ssl id="defaultSSL" securityLevel="HIGH"/>
```

The securityLevel attribute is now ignored, so the previous <ssl> configuration is treated the same as the following configuration, which has no securityLevel attribute:

```xml
<ssl id="defaultSSL"/>
```

Existing Usage: A user specifies all ciphers from the effective JDK list, excluding all TLS_RSA ciphers except for one (TLS_RSA_WITH_AES_128_GCM_SHA256)

```xml
<ssl id="defaultSSL" securityLevel="CUSTOM" enabledCiphers="TLS_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384 TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256 TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305_SHA256 TLS_DHE_DSS_WITH_AES_256_GCM_SHA384 TLS_DHE_DSS_WITH_AES_128_GCM_SHA256 TLS_ECDHE_ECDSA_WITH_AES_256_CBC_SHA384 TLS_ECDHE_ECDSA_WITH_AES_128_CBC_SHA256 TLS_DHE_DSS_WITH_AES_256_CBC_SHA256 TLS_DHE_DSS_WITH_AES_128_CBC_SHA256 TLS_ECDHE_ECDSA_WITH_AES_256_CBC_SHA TLS_ECDHE_ECDSA_WITH_AES_128_CBC_SHA TLS_DHE_DSS_WITH_AES_256_CBC_SHA TLS_DHE_DSS_WITH_AES_128_CBC_SHA TLS_RSA_WITH_AES_128_GCM_SHA256"/>
```

Example with new syntax: Use wildcards to achieve the same logic

```xml
<ssl id="defaultSSL" enabledCiphers="-TLS_RSA* +TLS_RSA_WITH_AES_128_GCM_SHA256"/>
```

To learn more about Transport Security, see SSL Constants Javadoc, JSSEProvider Javadoc, and SSL Configuration Reference.
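To make the two modes and the mixed-entry fallback concrete, here is a hypothetical model of the enabledCiphers rules described above. It is not Liberty's code: it assumes that +/- filters are applied left to right to the JDK's list, that + re-adds matching suites from that same list, and that * is only supported as a trailing wildcard.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashSet;
import java.util.List;

// Hypothetical model of the enabledCiphers rules described above; NOT Liberty's implementation.
class CipherFilter {

    // Only a trailing '*' is treated as a wildcard in this sketch.
    static boolean matches(String pattern, String cipher) {
        return pattern.endsWith("*")
                ? cipher.startsWith(pattern.substring(0, pattern.length() - 1))
                : cipher.equals(pattern);
    }

    static List<String> effectiveCiphers(String enabledCiphers, List<String> jdkCiphers) {
        if (enabledCiphers == null || enabledCiphers.isBlank()) {
            return jdkCiphers; // nothing configured: use the JDK's effective list
        }
        String[] tokens = enabledCiphers.trim().split("\\s+");
        long filterCount = Arrays.stream(tokens)
                .filter(t -> t.startsWith("+") || t.startsWith("-"))
                .count();
        if (filterCount == 0) {
            return List.of(tokens); // mode 1: static, space-separated list
        }
        if (filterCount != tokens.length) {
            // Mixed static and +/- entries: report it and fall back to the JDK list.
            System.err.println("enabledCiphers mixes static and +/- entries; using the JDK list");
            return jdkCiphers;
        }
        // Mode 2: apply the filters to the JDK list from left to right.
        LinkedHashSet<String> result = new LinkedHashSet<>(jdkCiphers);
        for (String token : tokens) {
            String pattern = token.substring(1);
            if (token.startsWith("-")) {
                result.removeIf(c -> matches(pattern, c));
            } else {
                jdkCiphers.stream().filter(c -> matches(pattern, c)).forEach(result::add);
            }
        }
        return new ArrayList<>(result);
    }
}
```

To try it against your own JVM, you could pass List.of(SSLContext.getDefault().getDefaultSSLParameters().getCipherSuites()) as the JDK list (SSLContext.getDefault() declares NoSuchAlgorithmException).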

Security vulnerability (CVE) fixes in this release

| CVE | CVSS Score | Vulnerability Assessment | Versions Affected | Notes |
|---|---|---|---|---|
| | 7.5 | Identity spoofing | 17.0.0.3-26.0.0.4 | |

For a list of past security vulnerability fixes, reference the Security vulnerability (CVE) list.

Notable bugs fixed in this release

We’ve spent some time fixing bugs. The following sections describe just some of the issues resolved in this release. If you’re interested, here’s the full list of bugs fixed in 26.0.0.5.

Get Open Liberty 26.0.0.5 now

Available through Maven, Gradle, Docker, and as a downloadable archive.

May 17, 2026

GlassFish 8.0.2 Released: With important security fixes and other improvements

by Ondro Mihályi at May 17, 2026 11:11 PM

The latest version of Eclipse GlassFish, 8.0.2, was released on May 5, 2026, with fixes for several critical vulnerabilities. It builds on a lot of subtle work and improvements in GlassFish components such as the Eclipse Grizzly HTTP framework, and in related components such as the Eclipse OpenMQ message broker and Eclipse ORB (CORBA) for remote EJB calls. The release of GlassFish 8.0.2 is further evidence that GlassFish remains an actively evolving platform, backed by the dedicated maintenance and support of the OmniFish team. As the primary drivers of this effort, we’d like to share a deeper look into the technical advancements in this new version.

Security fixes

First and foremost, GlassFish 8.0.2 brings a few important security fixes, which alone should be a good-enough reason to upgrade GlassFish:

- CVE-2026-24457 (9.8 CRITICAL) – Allows reading arbitrary files from the OpenMQ broker’s server

- CVE-2026-2587 (9.6 CRITICAL) – An authenticated Remote Code Execution (RCE) vulnerability in Admin Console

- CVE-2026-2586 (9.1 CRITICAL) – A critical Remote Code Execution (RCE) vulnerability in Admin Console

The first two of those CVEs were reported directly to the Eclipse Foundation and the Eclipse GlassFish team. OmniFish collaborated closely on these fixes and made sure they are released in GlassFish 8.0.2 and properly published to the public CVE registries.

[…]

April 19, 2026

API versioning in Java using JAX-RS with Jakarta EE and MicroProfile

by Rustam Mehmandarov at April 19, 2026 07:50 AM

Creating APIs and maintaining them over time often means that we need to version them. We will look into several ways of doing so in Java using JAX-RS, while building our API end-points with Jakarta EE and MicroProfile. This post was inspired by my talk “API = Some REST and HTTP, right? RIGHT?!”

- Introduction

- Why Versioning?

- Show Me The CODE!

- 1. URL Versioning

- 2. Header Versioning

- 3. Media Type Versioning

- 4. Request Parameter Versioning

- 5. Bonus: Combining Strategies

- 6. End-Point Deprecation

- Summary Comparison

- Conclusion

- What’s Next?

Introduction

APIs, like all software, evolve. Over time, we often need to change end-point definitions, for example by adding or deleting resources or changing the attributes of a resource. Some changes, like adding optional fields, are harmless; others are breaking. To ensure backwards compatibility, at some point you will need versioning so that old and new consumers can coexist.

However, versioning an API endpoint raises the question of how to do it. In this post, we’ll explore several common API versioning strategies using Jakarta EE and Java.

💡 Note: There is no silver bullet – instead, we’ll explore pros, cons, and real-world fit.

Why Versioning?

Why not just change the API?

Because breaking contracts is dangerous — clients may not update in sync, and you’ll break production consumers.

Versioning allows you to:

- Support legacy clients

- Introduce new features safely

- Deprecate responsibly

⚠️ Caution: Versioning can cause “version explosion.” Each version increases long-term maintenance cost – aka technical debt.

Best Practice: Prefer backward-compatible changes (e.g., adding fields) whenever possible. To mitigate risks, it’s important to follow best practices for versioning and provide clear documentation and migration paths for users. Also, remember to deprecate old versions to minimize maintenance efforts.

Show me the CODE!

I have created a repository called Random Strings to showcase various concepts. For this blog post, I recommend having a look at RandomStringsAPIDemoController.java and request_examples.http. You will find all the info on building and running the code in the repo’s README.md file. Each section below contains a “How to call it” part with an example using curl or HTTP files, based on this repo.

1. URL Versioning

What it looks like

A version appears directly in the URI path. If your API is at https://example.com/api, and the current version is version 1, the URL for a resource might look like this: https://example.com/api/v1/resource:

```java
@GET
@Path("/v2/")
@Produces(MediaType.APPLICATION_JSON)
public Response getV2() {
    return Response.status(Response.Status.NOT_IMPLEMENTED)
            .entity("This v2 using *path versioning* of the API is not implemented.")
            .build();
}
```

How to call it

cURL:

```shell
curl -X GET http://localhost:8080/api/rnd/v2/ \
  -H "Accept: application/json"
```

HTTP Request (.http file):

```http
GET http://localhost:8080/api/rnd/v2/
Accept: application/json
```

✅ Pros:

- Simple and intuitive. Visible.

- Easy to test (e.g., with curl, Postman, or directly in a browser).

- Plays well with gateways and reverse proxies.

- Clear visual distinction between versions.

❌ Cons:

- Pollutes the URI with versioning logic.

- Breaks REST’s principle of stable resource identifiers.

- Clients have to update URLs when migrating.

- Risk of accumulating too many legacy versions.

- Can result in cluttered and difficult-to-read URLs if there are multiple versions of the API.

🔍 However: Despite its REST purism flaw, URL versioning is extremely practical and widely adopted.
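Because the version sits in the path, a gateway or reverse proxy can route on it with simple string matching. A small, hypothetical helper that extracts the version number from a path segment like v2 might look like this; the class name and the v&lt;digits&gt; convention are assumptions based on the /api/rnd/v2/ example.

```java
import java.util.OptionalInt;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: pulls the API version out of a path such as /api/rnd/v2/.
class PathVersion {
    // A whole path segment consisting of "v" followed by digits.
    private static final Pattern VERSION_SEGMENT = Pattern.compile("(?:^|/)v(\\d+)(?=/|$)");

    static OptionalInt versionOf(String path) {
        Matcher m = VERSION_SEGMENT.matcher(path);
        return m.find() ? OptionalInt.of(Integer.parseInt(m.group(1))) : OptionalInt.empty();
    }
}
```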

2. Header Versioning

What it looks like

Client specifies version in a custom HTTP header (e.g., Accept-Version, X-API-Version, etc.):

@Path("/hi2")
@GET
@Produces({"application/json"})
public String entryPoint2(@HeaderParam("Accept-Version") String apiVersion) {
    // No version header: behave like the default, unversioned endpoint
    if (apiVersion == null || apiVersion.isEmpty()) {
        return "Default unversioned endpoint hit.";
    }
    String message = "Versioned: Using custom headers. Using version: " + apiVersion + ".";
    return message;
}

How to call it

Note: This is for demo purposes only. It has to have a different URL than the regular API; otherwise, it will also intercept calls that do not contain the Accept-Version header.

cURL:

curl -X GET http://localhost:8080/api/rnd/versioned/ \
  -H "Accept: application/json" \
  -H "Accept-Version: 2"

HTTP Request (.http file):

GET http://localhost:8080/api/rnd/versioned/
Accept: application/json
Accept-Version: 2

✅ Pros:

- Keeps URL structure clean and predictable.

- Closer to HTTP semantics (headers = metadata).

- Allows centralized versioning logic in filters/interceptors.

❌ Cons:

- Not self-descriptive — clients must “know the secret handshake”.

- Poor discoverability (not visible in browser without tools).

- Breaks caching in some proxies/CDNs unless the response declares Vary: Accept-Version or the cache is explicitly configured to key on the header.

- Adds complexity to tooling and testing.

⚠️ Challenge: Header versioning can feel “invisible” and cause developer confusion if not well-documented.
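The fallback in entryPoint2 above generalizes to a small resolver that turns the raw header into a version number. This Java SE sketch assumes a digits-only header value and a caller-supplied default; the names HeaderVersion and resolve are hypothetical and not part of Jakarta REST:

```java
// Hypothetical helper: turns an Accept-Version header value into a version number.
final class HeaderVersion {
    private HeaderVersion() {}

    /**
     * @param headerValue    raw header value, null when the client sent no header
     * @param defaultVersion version to use when the header is missing or blank
     * @throws IllegalArgumentException if the header is present but not a plain number
     */
    static int resolve(String headerValue, int defaultVersion) {
        if (headerValue == null || headerValue.isBlank()) {
            // Same idea as the "Default unversioned endpoint hit." branch
            return defaultVersion;
        }
        String trimmed = headerValue.trim();
        if (!trimmed.matches("\\d{1,9}")) {
            // Reject values such as "2.0" or "latest" instead of guessing
            throw new IllegalArgumentException("Unsupported Accept-Version: " + headerValue);
        }
        return Integer.parseInt(trimmed);
    }
}
```

In a resource method the input would come from @HeaderParam("Accept-Version"); mapping the IllegalArgumentException to 400 Bad Request is one reasonable choice, and it keeps the “secret handshake” explicit instead of silently serving a default.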

3. Media Type Versioning (Content Negotiation)

What it looks like

The client specifies the version via a custom media type in the Accept header. This is sometimes called Content Negotiation versioning.

Accept: application/hi.v3+json

In Jakarta EE:

@Path("/hi")
@GET
@Produces({"application/hi.v3+json", "application/hi.v4+json"})
public String entryPoint() {
    // Selected only when the Accept header requests one of the listed media types
    String message = "Versioned: Hai there!";
    return message;
}

How to call it

You can request different versions (e.g., v3, v4, v5) by updating the media type:

cURL:

curl -X GET http://localhost:8080/api/rnd/ \
  -H "Accept: application/rnd.v3+json"

HTTP Request (.http file):

GET http://localhost:8080/api/rnd/
Accept: application/rnd.v3+json

✅ Pros:

- Very REST-compliant: changes representation, not resource.

- URI remains stable.

- Supports richer format negotiation (e.g., XML, HAL).

❌ Cons:

- Requires strict control over media types.

- Not all clients/tooling handle custom media types well.

- Breaks with some reverse proxies and middleware that don’t forward full Accept headers.

- More work to configure content negotiation.

🧪 Observation: Elegant in design, but rarely used consistently in real-world public APIs.
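Under the hood, media type versioning means reading a number out of the Accept header. Jakarta REST performs the matching itself through @Produces; the Java SE sketch below only illustrates the parsing step, assuming the application/<name>.v<N>+json naming used in this post. The class name MediaTypeVersion is hypothetical, and quality weights (q=) are ignored, so a real implementation would also need to honor client preferences:

```java
import java.util.OptionalInt;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Illustrative parser, not how Jakarta REST implementations match @Produces.
final class MediaTypeVersion {
    // e.g. "application/rnd.v3+json" -> 3; media type names are case-insensitive
    private static final Pattern VENDOR_JSON = Pattern.compile(
            "application/[a-z0-9.-]+\\.v(\\d{1,9})\\+json", Pattern.CASE_INSENSITIVE);

    private MediaTypeVersion() {}

    /** Returns the version of the first versioned media type listed in an Accept header. */
    static OptionalInt fromAccept(String acceptHeader) {
        if (acceptHeader == null) {
            return OptionalInt.empty();
        }
        for (String range : acceptHeader.split(",")) {
            // Drop parameters such as ";q=0.9" before matching
            String mediaType = range.split(";", 2)[0].trim();
            Matcher m = VENDOR_JSON.matcher(mediaType);
            if (m.matches()) {
                return OptionalInt.of(Integer.parseInt(m.group(1)));
            }
        }
        return OptionalInt.empty();
    }
}
```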

4. Request Parameter Versioning

What it looks like

Technically, the client can also specify the version in a URL query parameter (e.g., ?version=2). In my opinion, however, this is not a recommended strategy.

https://example.com/api/resource?version=2

How to call it

cURL:

curl -X GET "http://localhost:8080/api/rnd?version=2" \
  -H "Accept: application/json"

HTTP Request (.http file):

GET http://localhost:8080/api/rnd?version=2
Accept: application/json

✅ Pros:

- Simplicity & discoverability: Easy to test in a browser without specialized tools.

- Defaulting logic: Straightforward to implement “default to latest” if the parameter is omitted.

- Caching friendly: Most CDNs include the query string in the cache key by default, so each version is cached separately.

❌ Cons:

- URI Pollution: Mixes resource identification with technical metadata.

- Routing complexity: Routing based on query parameters usually requires custom middleware or manual logic inside the controller.

- Harder to generate clean, automated documentation (like OpenAPI) when multiple versions share the same path.
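The “default to latest” logic listed among the pros takes only a few lines. This Java SE sketch reads version from the raw query string of a java.net.URI; the class name QueryVersion and the LATEST constant are hypothetical, and a Jakarta REST resource would normally receive the value through @QueryParam("version") instead:

```java
import java.net.URI;
import java.net.URLDecoder;
import java.nio.charset.StandardCharsets;

// Hypothetical helper for ?version=N, defaulting to the latest version when absent.
final class QueryVersion {
    static final int LATEST = 2; // assumption: the newest version this API serves

    private QueryVersion() {}

    static int fromUri(URI uri) {
        String query = uri.getRawQuery();
        if (query == null) {
            return LATEST;
        }
        for (String pair : query.split("&")) {
            String[] kv = pair.split("=", 2);
            if (kv.length == 2 && kv[0].equals("version")) {
                String value = URLDecoder.decode(kv[1], StandardCharsets.UTF_8);
                if (value.matches("\\d{1,9}")) {
                    return Integer.parseInt(value);
                }
                throw new IllegalArgumentException("Unsupported version: " + value);
            }
        }
        return LATEST; // parameter omitted: default to latest
    }
}
```

One design caveat: defaulting to the latest version means unversioned clients silently pick up breaking changes whenever LATEST moves. Pinning the default to the oldest supported version is the more conservative alternative.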

5. Bonus: Combining Strategies - Transparent URI Rewriting (Enterprise Pattern)

In large enterprises, you might find that different clients have different needs. Some prefer the explicitness of URL versioning, while others require the clean URIs of Header versioning. You don’t have to choose just one—you can support both without duplicating your backend routing logic.

A common practice is to structure all your resource classes using URL versioning (e.g., @Path("/v1/resource")) and use a @PreMatching filter to intercept requests and transparently rewrite the URI when a client sends a version header instead.

Here is what that looks like in Jakarta EE using a ContainerRequestFilter:

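import java.net.URI;

import jakarta.ws.rs.container.ContainerRequestContext;
import jakarta.ws.rs.container.ContainerRequestFilter;
import jakarta.ws.rs.container.PreMatching;
import jakarta.ws.rs.core.UriBuilder;
import jakarta.ws.rs.ext.Provider;

@Provider
@PreMatching // only pre-matching filters may change the request URI
public class HeaderVersionFilter implements ContainerRequestFilter {

    @Override
    public void filter(ContainerRequestContext ctx) {
        // Path relative to the base URI; drop a leading slash if the runtime includes one
        String path = ctx.getUriInfo().getPath();
        if (path.startsWith("/")) {
            path = path.substring(1);
        }

        // If the path is already versioned (e.g., starts with v1, v2), let it pass
        if (path.matches("v\\d+(/.*)?")) return;

        // Otherwise, check if the client provided a version header.
        // Accept digits only, so the header cannot inject extra path segments.
        String version = ctx.getHeaderString("X-API-Version");
        if (version != null && version.matches("\\d+")) {
            // Transparently rewrite the URI internally to match our URL-based routes,
            // keeping any query string from the original request
            URI baseUri = ctx.getUriInfo().getBaseUri();
            URI newUri = UriBuilder.fromUri(baseUri)
                    .path("v" + version)
                    .path(path)
                    .replaceQuery(ctx.getUriInfo().getRequestUri().getRawQuery())
                    .build();
            ctx.setRequestUri(baseUri, newUri);
        }
    }
}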

✅ Pros:

- Ultimate Flexibility: Clients can use http://api.example.com/v2/resource or http://api.example.com/resource with an X-API-Version: 2 header.

- Single Source of Truth: Your backend controllers only need to use @Path("/v2/"). You don’t have to write duplicate methods to handle both headers and paths.

❌ Cons:

- Magic Routing: It introduces a layer of “magic” where the requested URI differs from the routed URI, which can briefly confuse new developers debugging the application.

💡 Want to know more? Read up on the terms Version Normalization and Internal Decoupling.
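The rewrite rule at the heart of HeaderVersionFilter can be exercised without a container. The following Java SE sketch extracts the decision into a pure function so the regex and the header handling can be unit tested. The class and method names are hypothetical; the sketch strips a leading slash itself in case the path arrives with one, and it accepts only digits in the header, so a value such as 2/../admin cannot smuggle extra segments into the rewritten path:

```java
import java.util.Optional;

// Hypothetical pure-function model of the filter's rewrite decision.
final class VersionRewrite {
    private VersionRewrite() {}

    /**
     * @param relativePath  request path relative to the base URI, e.g. "resource" or "v2/resource"
     * @param versionHeader value of X-API-Version, may be null
     * @return the rewritten relative path, or empty if the request should pass through unchanged
     */
    static Optional<String> rewrite(String relativePath, String versionHeader) {
        String path = relativePath.startsWith("/") ? relativePath.substring(1) : relativePath;
        if (path.matches("v\\d+(/.*)?")) {
            return Optional.empty(); // already versioned in the URL: the URL wins over the header
        }
        if (versionHeader == null || !versionHeader.matches("\\d+")) {
            return Optional.empty(); // no usable header: leave routing untouched
        }
        return Optional.of("v" + versionHeader + "/" + path);
    }
}
```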

6. End-Point Deprecation

Eventually, you will need to retire old API versions. Remember: every old version you keep around is technical debt — it increases long-term maintenance cost. When deprecating an endpoint, consider the following best practices:

- Update the Docs: Use OpenAPI’s @Operation annotation to clearly mark it as deprecated.

- Add @Deprecated: Use the Java @Deprecated annotation where necessary.

- HTTP Redirects: Consider returning HTTP codes like 302 Found or 301 Moved Permanently after some time (or 307 Temporary Redirect / 308 Permanent Redirect if clients must keep the original HTTP method, e.g., for POST).

- Add a Link header: Provide a link to the new version in the response headers.

- Log / Count calls: Track usage (e.g., with MicroProfile @Counted) to know when it is safe to finally remove the endpoint.

Here is a practical example in Jakarta EE showing how to deprecate an endpoint, add a Link header, and track metrics:

@GET
@Path("v0.1/")
@Produces(MediaType.APPLICATION_JSON)
@Operation(summary = "DEPRECATED. Use v2 now. Returns the adjective-noun pair",
        description = "Deprecated function. The pair of one random adjective and one random noun is returned as an array.")
@Counted(name = "totalCountToRandomPairCalls_Versioned_Path_DEPRECATED",
        absolute = true,
        description = "Deprecated function call: Total number of calls to random string pairs.",
        tags = {"calls=pairs"})
@Deprecated
public Response getRndStringPathDeprecated() {
    // Point clients at the replacement version via a Link response header
    URI newVersionURI = UriBuilder.fromUri("/api/rnd/v2/").build();
    Link newVersionLink = Link.fromUri(newVersionURI).rel("alternate").build();
    return Response.ok("Deprecated response", MediaType.APPLICATION_JSON)
            .header(jakarta.ws.rs.core.HttpHeaders.LINK, newVersionLink.toString())
            .header("X-API-Version", "0.1")
            .build();
}

How to call it (Deprecated endpoint)

cURL:

curl -X GET http://localhost:8080/api/rnd/v0.1/ \
  -H "Accept: application/json"

HTTP Request (.http file):

GET http://localhost:8080/api/rnd/v0.1/
Accept: application/json
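The Link header sent by the deprecated endpoint uses the RFC 8288 web linking format: a URI in angle brackets followed by parameters such as rel. Link.toString() builds this for you; the Java SE sketch below (hypothetical class name) only shows what ends up on the wire, and the exact parameter formatting of a given Jakarta REST implementation may differ slightly:

```java
import java.net.URI;

// Hypothetical helper showing the RFC 8288 Link header value format.
final class LinkHeader {
    private LinkHeader() {}

    static String value(URI target, String rel) {
        // <target>; rel="relation-type"
        return "<" + target + ">; rel=\"" + rel + "\"";
    }

    public static void main(String[] args) {
        System.out.println(value(URI.create("/api/rnd/v2/"), "alternate"));
        // </api/rnd/v2/>; rel="alternate"
    }
}
```

Besides alternate, the IANA link relation registry also contains successor-version (defined in RFC 5829), which describes the relationship between a deprecated version and its replacement more precisely.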

Summary Comparison

The following table summarizes all the different routing strategies implemented in the demo project, illustrating how the HTTP method, path, and headers combine to invoke the correct Java method. The method names refer to the methods in RandomStringsAPIDemoController.java (or RandomStringsController.java):

| HTTP Method | Path | Headers | Method Invoked | Notes |
|---|---|---|---|---|
| GET | /rnd | None | getRndString() | Default (unversioned) endpoint |
| GET | /rnd | Accept: application/json | getRndString() | Standard media type |
| GET | /rnd/v2/ | Any | getRndStringV2path() | Demo for path-based versioning |
| GET | /rnd/versioned | None | getRndStringV2Header() | Fallback to getRndString() if header is missing |
| GET | /rnd/versioned | Accept-Version: 2 | getRndStringV2Header() | Header-based versioning |
| GET | /rnd | Accept: application/rnd.v3+json | getRndStringV3V4MediaType() | Media type versioning (v3) |
| GET | /rnd | Accept: application/rnd.v4+json | getRndStringV3V4MediaType() | Media type versioning (v4) |
| GET | /rnd | Accept: application/rnd.v5+json | getRndStringV5MediaType() | Media type versioning (v5) |
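To make the precedence in the table explicit, here is a plain Java SE model that maps a request to the method name the table lists. It is only a model of the table (the DemoRoutes class is hypothetical, and paths are compared exactly as written in the table); real Jakarta REST runtimes select the resource method by path first and then by media type, following the specification's matching algorithm:

```java
import java.util.Locale;

// Hypothetical model of the summary table above, not the demo's actual routing code.
final class DemoRoutes {
    private DemoRoutes() {}

    /**
     * @param path   request path under /api, e.g. "/rnd"
     * @param accept Accept header value, or null
     */
    static String resolve(String path, String accept) {
        // 1. Path-based versioning: headers do not matter
        if (path.equals("/rnd/v2/")) {
            return "getRndStringV2path()";
        }
        // 2. Header-based versioning: the method itself falls back when Accept-Version is missing
        if (path.equals("/rnd/versioned")) {
            return "getRndStringV2Header()";
        }
        // 3. Media type versioning on the unversioned path
        if (path.equals("/rnd")) {
            String type = accept == null ? "" : accept.trim().toLowerCase(Locale.ROOT);
            switch (type) {
                case "application/rnd.v3+json":
                case "application/rnd.v4+json":
                    return "getRndStringV3V4MediaType()";
                case "application/rnd.v5+json":
                    return "getRndStringV5MediaType()";
                default:
                    return "getRndString()"; // no Accept header or plain application/json
            }
        }
        return "(no match)"; // not covered by the table
    }
}
```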

Conclusion

There is no single correct approach to API versioning. For most teams and public APIs, URL versioning is good enough—it’s visible, easy to test, and plays well with existing tooling. However, you might want to use header versioning if your APIs are primarily consumed by internal services or SDKs that can abstract away the complexity. Reserve media type versioning for hypermedia-rich or REST-purist APIs, and only if your tooling supports it end-to-end.

Consider who your consumers are, whether your API is public or internal, your infrastructure maturity, and your team’s ability to support multiple versions.

What’s Next?

Versioning is just one part of building robust REST APIs. If you want to dive deeper, have a look at the API Guide for Java repository and the slides in the presentation folder. They cover documentation with OpenAPI, security best practices (like RBAC and JWT integration), advanced patterns (pagination, async APIs), and going beyond REST with gRPC and GraphQL.

Happy coding!

April 07, 2026

Jakarta EE 11 Platform, Java 26, and more in 26.0.0.4-beta

April 07, 2026 12:00 AM

This beta introduces Jakarta EE 11 Platform and Web Profile, and delivers enhancements across Jakarta Authentication 3.1, Jakarta Authorization 3.0, and Jakarta Security 4.0. In addition, it provides a preview of Jakarta Data 1.1 M2, support for Java 26, MCP Server updates, and Jandex index improvements.

The Open Liberty 26.0.0.4-beta includes the beta features described in the following sections, along with all GA features.

See also previous Open Liberty beta blog posts.

Jakarta EE 11 Platform and Web Profile

Open Liberty 24.0.0.11-beta was one of the ratifying implementations for Jakarta EE 11 Core Profile. Building on that foundation, the 26.0.0.4-beta release completes Jakarta EE 11 beta support, including Jakarta EE Application Client 11.0, Jakarta EE Web Profile 11.0, and Jakarta EE Platform 11.0.

Liberty provides convenience features that bundle all component specifications in the Jakarta EE Web Profile and Jakarta EE Platform. The webProfile-11.0 Liberty feature includes all Jakarta EE Web Profile functions, including the new Jakarta Data 1.0 component. The jakartaee-11.0 Liberty feature provides the Jakarta EE Platform version 11 implementation. For Jakarta EE 11 features in the application client, use the jakartaeeClient-11.0 Liberty feature.

These convenience features enable developers to rapidly develop applications using all APIs in the Web Profile and Platform specifications. Unlike the jakartaee-10.0 Liberty feature, the jakartaee-11.0 Liberty feature no longer enables Jakarta Managed Beans, because that specification, including the jakarta.annotation.ManagedBean annotation API, was removed from the platform. Applications relying on the @ManagedBean annotation must migrate to CDI annotations.

The Jakarta EE 11 Platform specification removes all optional specifications from the platform, so the Jakarta SOAP with Attachments, XML Binding, XML Web Services, and Enterprise Beans 2.x API functions are not included with the jakartaee-11.0 Liberty feature. To use these capabilities, explicitly add Liberty features to your server.xml feature list: xmlBinding-4.0 for XML Binding, xmlWS-4.0 for SOAP with Attachments and XML Web Services, and enterpriseBeansHome-4.0 for Jakarta Enterprise Beans 2.x APIs. Alternatively, use the equivalent versionless features with the jakartaee-11.0 platform.

When using the Liberty application client with the jakartaeeClient-11.0 feature, Jakarta SOAP with Attachments, XML Binding, and XML Web Services client functions are not available. To continue using these functions in your Liberty application client, enable the xmlBinding-4.0 and the new xmlWSClient-4.0 features in your client.xml file.

To enable the Jakarta EE 11 beta features in your Liberty server’s server.xml:

<featureManager>
    <feature>jakartaee-11.0</feature>
</featureManager>

Or you can add the Web Profile convenience feature to enable all of the Jakarta EE 11 Web Profile beta features at once:

<featureManager>
    <feature>webProfile-11.0</feature>
</featureManager>

You can enable the Jakarta EE 11 features on the Application Client Container in the client.xml file:

<featureManager>
    <feature>jakartaeeClient-11.0</feature>
</featureManager>

For more information, see the Jakarta EE Platform 11 Specification and the Jakarta EE Web Profile 11 Specification.

Application Authentication 3.1 (Jakarta Authentication 3.1)

Jakarta Authentication defines a general SPI for authentication mechanisms, which are controllers that interact with a caller and the container’s environment to obtain and validate the caller’s credentials. It then passes an authenticated identity (such as name and groups) to the container.

The 3.1 version of the Jakarta Authentication API removes the deprecated Permission-related fields in the jakarta.security.auth.message.config.AuthConfigFactory class, and the methods in the class no longer perform permission checks. These changes are part of Jakarta EE 11’s removal of SecurityManager support.

You can enable the Jakarta Authentication 3.1 feature by adding the appAuthentication-3.1 Liberty feature in the server.xml file:

<featureManager>
    <feature>appAuthentication-3.1</feature>
</featureManager>

For more information, see the Jakarta Authentication 3.1 specification and javadoc.

Application Authorization 3.0 (Jakarta Authorization 3.0)

Jakarta Authorization defines an SPI for authorization modules, which are repositories of permissions that facilitate subject-based security by determining whether a subject has a specific permission. It also defines algorithms that transform security constraints for specific containers, such as Jakarta Servlet or Jakarta Enterprise Beans, into these permissions.

The 3.0 API introduces the new jakarta.security.jacc.PolicyFactory and jakarta.security.jacc.Policy classes for making authorization decisions. These classes remove the dependency on the java.security.Policy class, which is deprecated in newer versions of Java. With the new PolicyFactory API, you can have a Policy per policy context instead of a single global policy, so separate policies can be maintained for each application.

Additionally, the 3.0 specification defines a mechanism for declaring PolicyConfigurationFactory and PolicyFactory classes in your application by using a web.xml file. This design allows an application to use a configuration different from the server-scoped one, while still delegating to the server-scoped factory for all other applications. Authorization modules can do this delegation by using decorator constructors for both the PolicyConfigurationFactory and PolicyFactory classes.

To configure your authorization modules in your application’s web.xml file, add the specification-defined context parameters:

<?xml version="1.0" encoding="UTF-8"?>
<web-app xmlns="https://jakarta.ee/xml/ns/jakartaee"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="https://jakarta.ee/xml/ns/jakartaee https://jakarta.ee/xml/ns/jakartaee/web-app_6_1.xsd"
         version="6.1">
    <context-param>
        <param-name>jakarta.security.jacc.PolicyConfigurationFactory.provider</param-name>
        <param-value>com.example.MyPolicyConfigurationFactory</param-value>
    </context-param>
    <context-param>
        <param-name>jakarta.security.jacc.PolicyFactory.provider</param-name>
        <param-value>com.example.MyPolicyFactory</param-value>
    </context-param>
</web-app>

Because Jakarta Authorization 3.0 no longer uses the java.security.Policy class and introduces a new configuration mechanism for authorization modules, the com.ibm.wsspi.security.authorization.jacc.ProviderService Liberty API is no longer available with the appAuthorization-3.0 feature. If a Liberty user feature configures authorization modules, the OSGi service that provided a ProviderService implementation must be updated to call the PolicyConfigurationFactory and PolicyFactory set methods, which configure the modules from within the OSGi service. Alternatively, you can use a Web Application Bundle (WAB) in your user feature to specify your security modules in a web.xml file.

Finally, the 3.0 API adds a new jakarta.security.jacc.PrincipalMapper class that you can obtain from the PolicyContext class when authorization processing is done in your Policy implementation. From this class, you can obtain the roles that are associated with a specific Subject to determine whether the Subject is in the required role.

You can use the PrincipalMapper class in your Policy implementation’s impliesByRole (or implies) method, as shown in the following example:

public boolean impliesByRole(Permission p, Subject subject) {
    // contextID: the policy context ID this Policy instance serves (assumed to be a field of the Policy)
    Map<String, PermissionCollection> perRolePermissions =
            PolicyConfigurationFactory.get().getPolicyConfiguration(contextID).getPerRolePermissions();
    PrincipalMapper principalMapper = PolicyContext.get(PolicyContext.PRINCIPAL_MAPPER);

    // Check to see if the Permission is in the all authenticated users role (**)
    if (!principalMapper.isAnyAuthenticatedUserRoleMapped() && !subject.getPrincipals().isEmpty()) {
        PermissionCollection rolePermissions = perRolePermissions.get("**");
        if (rolePermissions != null && rolePermissions.implies(p)) {
            return true;
        }
    }

    // Check to see if the roles for the Subject provided imply the permission
    Set<String> mappedRoles = principalMapper.getMappedRoles(subject);
    for (String mappedRole : mappedRoles) {
        PermissionCollection rolePermissions = perRolePermissions.get(mappedRole);
        if (rolePermissions != null && rolePermissions.implies(p)) {
            return true;
        }
    }
    return false;
}

You can enable the Jakarta Authorization 3.0 feature by adding the appAuthorization-3.0 Liberty feature in the server.xml file:

<featureManager>
    <feature>appAuthorization-3.0</feature>
</featureManager>

For more information, see the Jakarta Authorization 3.0 specification and javadoc.
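The decision logic of the impliesByRole example can be tried out without a container. The following Java SE sketch keeps its two checks (the ** "any authenticated user" role first, then the caller's mapped roles) but replaces Permission, PermissionCollection, Subject, and PrincipalMapper with plain strings, sets, and booleans. All names here are hypothetical simplifications, not the Jakarta Authorization API:

```java
import java.util.Map;
import java.util.Set;

// Simplified model of the impliesByRole example; permissions are plain strings here.
final class RoleCheck {
    private RoleCheck() {}

    static boolean impliesByRole(String permission,
                                 Map<String, Set<String>> perRolePermissions,
                                 boolean authenticated,
                                 boolean anyAuthenticatedUserRoleMapped,
                                 Set<String> mappedRoles) {
        // Mirrors the example: "**" permissions apply to authenticated callers
        // when the ** role is not mapped as an ordinary role
        if (!anyAuthenticatedUserRoleMapped && authenticated) {
            Set<String> anyAuthenticated = perRolePermissions.get("**");
            if (anyAuthenticated != null && anyAuthenticated.contains(permission)) {
                return true;
            }
        }
        // Otherwise the permission must be granted to one of the caller's mapped roles
        for (String role : mappedRoles) {
            Set<String> granted = perRolePermissions.get(role);
            if (granted != null && granted.contains(permission)) {
                return true;
            }
        }
        return false;
    }
}
```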

Application Security 6.0 (Jakarta Security 4.0)

- An In-memory Identity Store is a developer-defined store of credential information that is used during the Open Liberty authentication and authorization workflow. It provides a quick, simple, and convenient authentication mechanism for Liberty application testing, debugging, demos, and more.

- Multiple HTTP Authentication Mechanisms (HAMs) can now be defined within the same application. These mechanisms can be specified through built-in Jakarta annotations such as @FormAuthenticationMechanismDefinition or through custom implementations of the HttpAuthenticationMechanism interface.

- A new method called getAllDeclaredCallerRoles() is added to the SecurityContext interface. It returns a set of all static (declared) application roles that the authenticated caller is in.

- References to the IdentityStorePermission class are removed, as the class was previously deprecated.

All features are available as part of appSecurity-6.0.

All feature use cases are targeted towards Jakarta EE web application developers who want to move to a modern Jakarta version and use implementations of the Jakarta Security 4.0 specification.

In-memory identity store

Before the introduction of the new identity store specification, Jakarta Security natively supported only two types of identity stores: database and LDAP, both of which are used for credential validation. While effective for production environments, these options were considered heavyweight for testing, debugging, and demonstration scenarios.

Developers can also implement custom identity stores, but doing so requires bespoke code to collect, validate, and manage credential information. This effort can divert attention from core application development. A built-in, in-memory identity store reduces this burden for non-production use cases such as testing, debugging, and demonstrations.

Multiple HAMs

It is now possible for multiple authentication mechanisms to logically act as a single HTTP Authentication Mechanism (HAM), providing a more flexible, dynamic, and configurable approach to the authentication workflow.

Access to the HttpAuthenticationMechanism is now abstracted by an internal implementation of the new Jakarta HttpAuthenticationMechanismHandler interface. This implementation prioritizes custom‑defined HAMs first and then falls back to the built‑in (annotation‑defined) HAMs, in the following order: OpenID, custom form, form, and basic authentication mechanisms.

Developers are free to provide their own implementation of the HttpAuthenticationMechanismHandler, allowing them to define a custom prioritization strategy for selecting HAMs. They must supply such an implementation if any built‑in HAMs are defined with the optional qualifiers attribute set.

getAllDeclaredCallerRoles()

The SecurityContext interface implementation is updated to include a new method, getAllDeclaredCallerRoles(), which returns a set of the declared application roles for an authenticated caller. If the caller is not authenticated or has no declared roles, the method returns an empty set.

How to use

Enable Jakarta Security 4.0 features within the server.xml file by adding the appSecurity-6.0 feature as shown in the following example:

<server description="Liberty server">
    . . .
    <featureManager>
        <feature>appSecurity-6.0</feature>
    </featureManager>
    <webAppSecurity allowInMemoryIdentityStores="true"/>
    . . .
</server>

As shown in the preceding example, when an application defines an in‑memory identity store in code, its use must also be explicitly enabled in the server.xml configuration. By default, the allowInMemoryIdentityStores attribute is set to false, which instructs the Liberty authentication workflows not to use in‑memory identity stores, even when a custom identity store handler is present. For applications that rely on multiple HAMs, the allowInMemoryIdentityStores attribute does not need to be set.


In-Memory Identity Store

The Jakarta Security Specification 4.0 provides details on how to specify credential information to be used during the authentication workflow through the new @InMemoryIdentityStoreDefinition annotation, as shown in the following example:

. . .
@InMemoryIdentityStoreDefinition(
    priority = 10,
    priorityExpression = "${80/20}",
    useFor = {VALIDATE, PROVIDE_GROUPS},
    useForExpression = "#{'VALIDATE'}",
    value = {
        @Credentials(callerName = "jasmine", password = "secret1", groups = { "caller", "user" })
    }
)

All attributes for the @InMemoryIdentityStoreDefinition annotation are shown in the example. The priority, priorityExpression, useFor, and useForExpression attributes are optional and set to sensible defaults.

Each @Credentials annotation maps a caller name to a password and optional group values. The callerName and password attributes are mandatory; if either one is omitted, a compilation error occurs.

The example demonstrates a single caller definition with credential information that uses a plain-text password. However, it is highly recommended to supply passwords that use an Open Liberty–supported encoding mechanism, as illustrated in the next example.

@InMemoryIdentityStoreDefinition(
    value = {
        @Credentials(callerName = "jasmine", password = "{xor}LDo8LTorbg==", groups = { "caller", "user" }),
        @Credentials(callerName = "frank", groups = { "user" }, password = "{hash}ARAAA <sequence shortened> Fyyw=="),
        @Credentials(callerName = "sally", groups = { "user" }, password = "{aes}ARAFIYJ <sequence shortened> WRQNA==")
    }
)

Encrypted and encoded passwords can be generated by using the Open Liberty securityUtility, which is included under the wlp/bin/securityUtility path. The following example demonstrates how to encode a text string by using the xor encoding mechanism:

wlp/bin/securityUtility encode --encoding=xor
Enter text: <enter text to encode>
Re-enter text:
{xor}PTA9Lyg

Multiple HAMs

Application Specification

The Jakarta Security 4.0 specification allows multiple HTTP Authentication Mechanisms (HAMs) to be defined within a single application, as shown in the following example:

@BasicAuthenticationMechanismDefinition(realmName="basicAuth")
@FormAuthenticationMechanismDefinition(
    loginToContinue = @LoginToContinue(errorPage = "/form-login-error.html",
                                       loginPage = "/form-login.html"))
@CustomFormAuthenticationMechanismDefinition(
    loginToContinue = @LoginToContinue(errorPage = "/custom-login-error.html",
                                       loginPage = "/custom-login.html"))

This example demonstrates how three HTTP Authentication Mechanisms (HAMs) can be defined within a single application.

Custom HAMs can also be defined in the same application by implementing the HttpAuthenticationMechanism interface in one or more classes, as shown in the following example:

@ApplicationScoped
// @Priority is optional and used to control selection priority if multiple custom definitions exist
@Priority(100)
public class CustomHAM implements HttpAuthenticationMechanism {

    @Override
    public AuthenticationStatus validateRequest(
            HttpServletRequest request,
            HttpServletResponse response,
            HttpMessageContext httpMessageContext) throws AuthenticationException {
        // implement custom logic here, and return an AuthenticationStatus
        return AuthenticationStatus.NOT_DONE;
    }
}

So a single application can have a mix of both annotation-defined HAMs and custom ones. In the previous two snippets of code, a total of four HAMs are defined (three by annotation and one custom one).

@Priority must be used to raise or lower the priority of one custom HAM over another. If not specified, then a default priority is assigned. If more than one custom HAM is defined, their priorities need to be explicitly set to unique values. If the priorities are set to the same value or remain unset and inherit the same default value, an error occurs.


HAM resolution

An internal implementation of the Jakarta Security 4.0 HttpAuthenticationMechanismHandler interface (the "internal HAM handler") is provided. When an application defines multiple HAMs, this internal handler selects a single HAM to be used in the authentication flow.

The order in which HAMs are considered (when present) is as follows:

- Custom (developer‑provided) HAMs
  - If multiple custom HAMs are defined, their relative order is resolved by using @Priority.

- OpenIdAuthenticationMechanismDefinition

- CustomFormAuthenticationMechanismDefinition

- FormAuthenticationMechanismDefinition

- BasicAuthenticationMechanismDefinition

Given this ordering, the Custom HAM is always selected in the authentication workflow if all five HAM types are defined in the application.
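The selection order above can be modelled as a simple ranking. The Java SE sketch below is a hypothetical model, not Liberty's internal HAM handler: custom HAMs are considered first, built-in HAM types are ranked in the listed order, and duplicate custom priorities are rejected as described earlier. This post does not say whether a higher or lower @Priority value wins, so the sketch assumes the highest value wins:

```java
import java.util.Comparator;
import java.util.HashSet;
import java.util.List;
import java.util.Optional;
import java.util.Set;

// Hypothetical model of the HAM selection order; not Liberty's implementation.
final class HamSelection {
    // Built-in HAM types, declared in the order they are considered
    enum BuiltIn { OPEN_ID, CUSTOM_FORM, FORM, BASIC }

    record CustomHam(String name, int priority) {}

    private HamSelection() {}

    static Optional<String> select(List<CustomHam> customHams, Set<BuiltIn> builtIns) {
        // Custom HAMs must have unique priorities, otherwise selection is ambiguous
        Set<Integer> seen = new HashSet<>();
        for (CustomHam ham : customHams) {
            if (!seen.add(ham.priority())) {
                throw new IllegalStateException("Duplicate @Priority " + ham.priority());
            }
        }
        if (!customHams.isEmpty()) {
            // Assumption: the highest @Priority value wins among custom HAMs
            return customHams.stream()
                    .max(Comparator.comparingInt(CustomHam::priority))
                    .map(CustomHam::name);
        }
        // Enum declaration order encodes OpenID, custom form, form, basic
        return builtIns.stream().min(Comparator.naturalOrder()).map(BuiltIn::name);
    }
}
```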

A developer must provide a custom implementation of the HttpAuthenticationMechanismHandler interface (a "custom HAM handler") if the internal HAM handler does not meet their requirements. A custom handler always takes precedence over the internal HAM handler, allowing any tailored algorithm to select a single HAM from multiple available mechanisms. Additional information about creating and using a custom HAM handler is provided in a later section.


Qualifiers

HAMs, whether defined through annotations or as custom implementations, can also include an optional class‑level qualifier to simplify HAM injection into a custom HAM handler. For example, to define qualified HAMs, you would first declare qualifier annotations such as:

@Qualifier
@Retention(RUNTIME)
@Target({TYPE, METHOD, FIELD, PARAMETER})
public @interface Admin {
}

(Not shown are the qualifier definitions for User and Fallback, which follow an identical pattern and are used below.)

Then, import and use the qualifiers in your built-in or custom HAM definitions, such as:

@CustomFormAuthenticationMechanismDefinition(<existing details not shown>, qualifiers={Admin.class})
@BasicAuthenticationMechanismDefinition(<existing details not shown>, qualifiers={User.class})

and

@ApplicationScoped
@Fallback // add custom qualifier to be used during injection
public class CustomHAM implements HttpAuthenticationMechanism {

    @Override
    public AuthenticationStatus validateRequest(. . .) {
        . . .
    }
}

The three HAMs defined in the example are available for injection into a custom HAM handler based on their qualifier names. This scenario represents the typical use case for applications that define multiple HAMs.

Note: If qualifiers are specified in any of the built-in HAM definitions, a custom HAM handler must be provided; otherwise, an error is raised. This requirement comes directly from the Jakarta Security specification. Details about creating and using a custom HAM handler are explained in the next section.

To implement a custom HAM handler, define a public @ApplicationScoped class that implements the HttpAuthenticationMechanismHandler interface, and inject the qualified HAMs by using standard CDI syntax, as shown in the following example:

@Default
@ApplicationScoped
public class CustomHAMHandler implements HttpAuthenticationMechanismHandler {

    @Inject @Admin // the custom form HAM
    private HttpAuthenticationMechanism adminHAM;

    @Inject @User // the basic HAM
    private HttpAuthenticationMechanism userHAM;

    @Inject @Fallback // the custom HAM
    private HttpAuthenticationMechanism fallbackHAM;

    @Override
    public AuthenticationStatus validateRequest(
            HttpServletRequest request,
            HttpServletResponse response,
            HttpMessageContext context) throws AuthenticationException {
        // Path within the application: strip the context root so "/admin" matches under any deployment
        String path = request.getRequestURI().substring(request.getContextPath().length());
        // Route to the appropriate mechanism based on the path; anything else uses the fallback
        if (path.startsWith("/admin")) {
            return adminHAM.validateRequest(request, response, context);
        } else if (path.startsWith("/user")) {
            return userHAM.validateRequest(request, response, context);
        } else {
            return fallbackHAM.validateRequest(request, response, context);
        }
    }
}

This custom HAM handler takes priority over the internal HAM handler, allowing a different prioritization algorithm to be implemented.

getAllDeclaredCallerRoles()

To use the new SecurityContext method, inject the SecurityContext implementation into your application and call the method directly, as shown in the following example:

@Inject
private SecurityContext securityContext;
. . .
// Assumes an authenticated caller: getCallerPrincipal() returns null otherwise
Set<String> allDeclaredCallerRoles = securityContext.getAllDeclaredCallerRoles();
System.out.println("All declared caller roles for caller ["
        + securityContext.getCallerPrincipal().getName()
        + "] are "
        + allDeclaredCallerRoles.toString());

Learn more

Further information can be found in the Jakarta Security Specification 4.0.

For more information about the securityUtility command, see the WAS Liberty base topic.
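The {xor} values used in the in-memory identity store examples are obfuscated, not encrypted. Decoding the sample value {xor}LDo8LTorbg== shows the scheme: each byte of the text is XORed with the underscore character (0x5F) and the result is Base64-encoded, which yields secret1, the same password that appears in plain text in the first example. The following Java SE sketch reproduces that format for illustration only (the class name XorEncoding is hypothetical); use securityUtility to generate real values, and prefer the {hash} or {aes} encodings for anything beyond demos:

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Illustrative reimplementation of the {xor} format; XOR is reversible obfuscation, not security.
final class XorEncoding {
    private static final byte KEY = '_'; // 0x5F, derived from decoding the documented sample

    private XorEncoding() {}

    static String encode(String plain) {
        byte[] bytes = plain.getBytes(StandardCharsets.UTF_8);
        for (int i = 0; i < bytes.length; i++) {
            bytes[i] ^= KEY;
        }
        return "{xor}" + Base64.getEncoder().encodeToString(bytes);
    }

    static String decode(String encoded) {
        if (!encoded.startsWith("{xor}")) {
            throw new IllegalArgumentException("Not an {xor} value: " + encoded);
        }
        byte[] bytes = Base64.getDecoder().decode(encoded.substring("{xor}".length()));
        for (int i = 0; i < bytes.length; i++) {
            bytes[i] ^= KEY;
        }
        return new String(bytes, StandardCharsets.UTF_8);
    }
}
```

Because decode is just encode run backwards, anyone who can read the annotation can recover the password, which is why this encoding suits tests and demos only.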

Beta support for Java 26

Java 26 introduces new features and enhancements over earlier releases that are worth reviewing. It is not a long-term support (LTS) release.

There are 10 new features (JEPs) in Java 26. Five are test features and five are fully delivered.

Test Features:

Delivered Features:

JEP 500 ("Prepare to Make Final Mean Final"), new in Java 26, starts enforcing true immutability of final fields by restricting their mutation through deep reflection. In Java 26, such mutations still work but trigger runtime warnings by default, preparing developers for stricter enforcement. Future releases are likely to throw exceptions instead, making final fields truly immutable.

Developers can opt in early to this stricter behavior by using a JVM flag (for example, --illegal-final-field-mutation=deny) to detect issues sooner.

This change improves program correctness and security, and it enables further JVM optimizations.
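The following sketch shows the kind of deep-reflection mutation that JEP 500 targets. FinalFieldMutationDemo, Config, and tryMutate are hypothetical names, and the code uses only java.lang.reflect.Field. On earlier JDKs the write succeeds silently; on Java 26 it should still succeed but with a runtime warning; and with --illegal-final-field-mutation=deny it is expected to be rejected, which this sketch assumes surfaces as an IllegalAccessException from Field.set.

```java
import java.lang.reflect.Field;

/** Demonstrates mutating a final instance field through deep reflection. */
public class FinalFieldMutationDemo {

    static final class Config {
        // Assigned in the constructor, so javac does not inline it as a compile-time constant.
        private final String mode;

        Config(String mode) {
            this.mode = mode;
        }

        String mode() {
            return mode;
        }
    }

    /** Tries to overwrite Config.mode; returns the resulting value, or "denied" if the JVM refuses. */
    static String tryMutate(Config config, String newValue) {
        try {
            Field field = Config.class.getDeclaredField("mode");
            field.setAccessible(true);
            // Allowed on earlier JDKs; Java 26 warns by default.
            // Assumption: with --illegal-final-field-mutation=deny, set() throws IllegalAccessException.
            field.set(config, newValue);
            return config.mode();
        } catch (IllegalAccessException e) {
            return "denied";
        } catch (NoSuchFieldException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        Config config = new Config("safe");
        System.out.println(tryMutate(config, "unsafe"));
    }
}
```

Running the same program once normally and once with the deny flag (for example, java --illegal-final-field-mutation=deny FinalFieldMutationDemo.java on Java 26) is one quick way to spot code paths, often in serialization or dependency-injection libraries, that rely on this behavior.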

Take advantage of these changes now to gain more time to evaluate how your applications and microservices behave on Java 26.

Get started today by downloading the latest release of IBM Semeru Runtime 26 or Temurin 26, and then downloading and installing the Open Liberty 26.0.0.4-beta. Update your Liberty server’s server.env file to set JAVA_HOME to your Java 26 installation directory, and start testing.

For more information on Java 26, see the Java 26 release notes page and API Javadoc page.

Updates to mcpServer-1.0

The Model Context Protocol (MCP) is an open standard that enables AI applications to access real-time information from external sources. The Liberty MCP Server feature, mcpServer-1.0, lets developers expose the business logic of their applications so that it can be integrated into agentic AI workflows.

This beta release of Open Liberty includes updates to the mcpServer-1.0 feature, such as dynamic registration of tools and support for version 2025-11-25 of the protocol.

Dynamically register MCP tools

Tools can now be registered dynamically through an API. This capability allows the set of available tools on the server to be adjusted based on configuration or environment.

Tools can be registered by injecting ToolManager and calling its methods to add, remove, and list the available tools on the server. The full Javadoc for ToolManager can be found within the Liberty beta in dev/api/ibm/javadoc/io.openliberty.mcp_1.0-javadoc.zip.

Tools can be registered when the application starts through the CDI Startup event. See the following example where the Startup event is used to register a weather forecast tool only if a WeatherClient bean is available.

@Inject
ToolManager toolManager;

@Inject
Instance<WeatherClient> weatherClientInstance;

private void createWeatherTool(@Observes Startup startup) {
    if (weatherClientInstance.isResolvable()) {
        WeatherClient weatherClient = weatherClientInstance.get();
        toolManager.newTool("getForecast")
                .setTitle("Weather Forecast Provider")
                .setDescription("Get weather forecast for a location")
                .addArgument("latitude", "Latitude of the location", true, Double.class)
                .addArgument("longitude", "Longitude of the location", true, Double.class)
                .setHandler(toolArguments -> {
                    Double latitude = (Double) toolArguments.args().get("latitude");
                    Double longitude = (Double) toolArguments.args().get("longitude");
                    String result = weatherClient.getForecast(
                            latitude,
                            longitude,
                            4,
                            "temperature_2m,snowfall,rain,precipitation,precipitation_probability");
                    return ToolResponse.success(result);
                })
                .register();
    }
}

Tool registration starts with newTool(), after which the tool's title, description, and arguments are added. The handler supplies the code to run when the tool is called. It takes a single ToolArguments parameter, which provides access to the arguments and other information about the tool call request. The handler must always create and return a ToolResponse.

Accept the 2025-11-25 MCP protocol

The mcpServer-1.0 feature now accepts version 2025-11-25 of the Model Context Protocol (MCP), although it does not support any new features introduced in this version of the protocol.

Supporting MCP 2025-11-25 introduces two notable behavior changes:

- Tool name restrictions: Tool names are now limited to ASCII letters (A–Z, a–z), digits (0–9), and the underscore ('_'), hyphen ('-'), and dot ('.') characters.

- Improved handling of invalid tool arguments: Invalid arguments passed when invoking a tool are now treated as tool execution errors rather than protocol errors. This change allows the LLM to receive feedback that the tool was called incorrectly and to retry with corrected arguments.
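Because existing tool names may now be rejected, it can help to check them before deploying. The following sketch encodes only the character rule described above as a regular expression; the ToolNames class and isValid method are hypothetical, and any other constraints that the 2025-11-25 specification places on names (such as length) are not checked here.

```java
import java.util.regex.Pattern;

/** Hypothetical check for the MCP 2025-11-25 tool-name character rule. */
public final class ToolNames {

    // One or more ASCII letters, digits, underscores, hyphens, or dots.
    // Inside a character class '.' is literal, and a trailing '-' is literal.
    private static final Pattern ALLOWED = Pattern.compile("[A-Za-z0-9_.-]+");

    private ToolNames() {
    }

    public static boolean isValid(String name) {
        return name != null && ALLOWED.matcher(name).matches();
    }

    public static void main(String[] args) {
        System.out.println(isValid("getForecast"));          // true
        System.out.println(isValid("weather.get-v2_beta"));  // true
        System.out.println(isValid("get forecast"));         // false (space)
        System.out.println(isValid("pr\u00e9vision"));       // false (non-ASCII letter)
    }
}
```

The getForecast tool from the earlier example already satisfies the rule.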

Paginated results

The result of a tools/list call is now paginated with a page size of 20. This means that if there are more than 20 tools deployed on the server, clients make several small calls to retrieve the list of tools, rather than one large call.
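In MCP, pagination is cursor-based: a result carries a nextCursor when more items remain, and the client passes that value back as the cursor of its next tools/list request. The following self-contained simulation shows the client loop against a page size of 20. The Page record, listTools, and fetchAll are hypothetical, and encoding the cursor as a start index is a simplification; real cursors are opaque tokens that clients should not interpret.

```java
import java.util.ArrayList;
import java.util.List;

/** Simulates cursor-based pagination of an MCP tools/list result (hypothetical types). */
public final class ToolListPagination {

    static final int PAGE_SIZE = 20;

    /** One page of results; nextCursor is null on the last page. */
    record Page(List<String> tools, String nextCursor) {
    }

    /** Hypothetical server side: the cursor is just the start index encoded as a string. */
    static Page listTools(List<String> allTools, String cursor) {
        int start = (cursor == null) ? 0 : Integer.parseInt(cursor);
        int end = Math.min(start + PAGE_SIZE, allTools.size());
        String next = (end < allTools.size()) ? Integer.toString(end) : null;
        return new Page(List.copyOf(allTools.subList(start, end)), next);
    }

    /** Client side: keeps calling until no nextCursor is returned. Returns the number of calls made. */
    static int fetchAll(List<String> allTools, List<String> collected) {
        String cursor = null;
        int calls = 0;
        do {
            Page page = listTools(allTools, cursor);
            calls++;
            collected.addAll(page.tools());
            cursor = page.nextCursor();
        } while (cursor != null);
        return calls;
    }

    public static void main(String[] args) {
        List<String> tools = new ArrayList<>();
        for (int i = 1; i <= 45; i++) {
            tools.add("tool_" + i);
        }
        List<String> collected = new ArrayList<>();
        int calls = fetchAll(tools, collected);
        System.out.println(calls + " calls, " + collected.size() + " tools"); // 3 calls, 45 tools
    }
}
```

With 45 tools, the client makes three calls that return 20, 20, and 5 tools.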

Bug fixes

- During cancellation of a tool call, we now check that both the session ID and the authenticated user match the session ID and the user that made the tool call. Previously, only the session ID was checked.

- Messages that are returned to the MCP client no longer contain Open Liberty message codes.

- Structured content is returned only when the client uses protocol version 2025-06-18 or later.
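The first and last of these fixes can be sketched as two small server-side checks. McpChecks, CallOwner, started, cancel, and supportsStructuredContent are hypothetical names and do not reflect Liberty's implementation. The version check relies on MCP protocol versions being YYYY-MM-DD date strings, which sort correctly as plain strings.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Hypothetical server-side checks mirroring two of the bug fixes above. */
public final class McpChecks {

    /** Who started an in-flight tool call. */
    record CallOwner(String sessionId, String user) {
    }

    private final Map<String, CallOwner> inFlight = new ConcurrentHashMap<>();

    void started(String requestId, String sessionId, String user) {
        inFlight.put(requestId, new CallOwner(sessionId, user));
    }

    /** Cancels only if BOTH the session ID and the authenticated user match the original caller. */
    boolean cancel(String requestId, String sessionId, String user) {
        CallOwner owner = inFlight.get(requestId);
        if (owner == null || !owner.sessionId().equals(sessionId) || !owner.user().equals(user)) {
            return false;
        }
        return inFlight.remove(requestId, owner);
    }

    /** Fixed-width YYYY-MM-DD strings compare lexicographically in date order. */
    static boolean supportsStructuredContent(String protocolVersion) {
        return protocolVersion.compareTo("2025-06-18") >= 0;
    }

    public static void main(String[] args) {
        McpChecks checks = new McpChecks();
        checks.started("42", "session-A", "alice");
        System.out.println(checks.cancel("42", "session-A", "mallory")); // false: wrong user
        System.out.println(checks.cancel("42", "session-A", "alice"));   // true
        System.out.println(supportsStructuredContent("2025-03-26"));     // false
        System.out.println(supportsStructuredContent("2025-11-25"));     // true
    }
}
```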

Further information

- For more information about the mcpServer-1.0 feature and how to get started using the beta, see our dedicated blog post.

- For more information about the Model Context Protocol, see modelcontextprotocol.io.

Preview of some Jakarta Data 1.1 M2 capability

This beta previews some new capabilities at the Jakarta Data 1.1 Milestone 2 level: Constraint subtype parameters for repository @Find methods, and limited use of Restriction with repository @Find operations. Also included from the prior beta are retrieving a subset (projection) of entity attributes and the @Is annotation.

Previously, parameter-based @Find repository methods could filter results only by using equality conditions. This limitation has now been removed, allowing additional filtering options to be defined.

Filtering can now be specified in several ways. One approach is to define the repository method parameter as a Constraint subtype, or to indicate the constraint subtype by using the @Is annotation. Alternatively, a repository @Find method can include a special parameter of type Restriction, allowing the application to supply one or more restrictions dynamically at runtime when the method is invoked.
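The difference between a constraint that is fixed at development time and a restriction that the caller supplies at run time can be illustrated with an in-memory analogue. The following sketch is plain Java built on java.util.function.Predicate and is not the Jakarta Data API; the Item record and the atMost, all, and find helpers are hypothetical stand-ins for AtMost, Restrict.all, and a @Find method.

```java
import java.util.List;
import java.util.function.Predicate;

/** Plain-Java analogue (NOT the Jakarta Data API) of fixed constraints plus run-time restrictions. */
public final class RestrictionAnalogue {

    record Item(String name, double price) {
    }

    /** Plays the role of a parameter constrained with AtMost: price <= maxPrice. */
    static Predicate<Item> atMost(double maxPrice) {
        return item -> item.price() <= maxPrice;
    }

    /** Plays the role of Restrict.all(...): every supplied restriction must hold. */
    @SafeVarargs
    static Predicate<Item> all(Predicate<Item>... restrictions) {
        Predicate<Item> combined = item -> true;
        for (Predicate<Item> restriction : restrictions) {
            combined = combined.and(restriction);
        }
        return combined;
    }

    /** The fixed constraint is always applied; the extra restriction is chosen by the caller. */
    static List<String> find(List<Item> items, double maxPrice, Predicate<Item> restriction) {
        return items.stream()
                .filter(atMost(maxPrice).and(restriction))
                .map(Item::name)
                .toList();
    }

    public static void main(String[] args) {
        List<Item> items = List.of(
                new Item("basic keyboard", 25.0),
                new Item("laser printer", 180.0),
                new Item("inkjet printer", 45.0));
        Predicate<Item> printers = item -> item.name().endsWith("printer");
        System.out.println(find(items, 50.0, all(printers))); // [inkjet printer]
    }
}
```

In Jakarta Data the repository implementation translates these conditions into the query sent to the data store rather than filtering objects in memory.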

In Jakarta Data, you define simple Java objects called entities to represent data, and interfaces called repositories to define data operations. You inject a repository into your application and use it. The implementation of the repository is automatically provided.

Start by defining an entity class that corresponds to your data. With relational databases, the entity class corresponds to a database table and the entity properties (public methods and fields of the entity class) generally correspond to the columns of the table. An entity class can be:

- annotated with jakarta.persistence.Entity and related annotations from Jakarta Persistence, or

- a Java class without entity annotations, in which case the primary key is inferred from an entity property named id or ending with Id, and an entity property named version designates an automatically incremented version column.
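The naming rules for entity classes without annotations can be sketched with plain reflection. EntityConventions, idProperty, hasVersionProperty, and the Part class are hypothetical. The sketch looks only at public fields (not accessor methods), and its preference for an exact id over a name ending in Id is an assumption made for illustration, not a statement of the Jakarta Data rules.

```java
import java.lang.reflect.Field;
import java.util.Arrays;
import java.util.Optional;

/** Hypothetical sketch of the naming conventions for entities without annotations. */
public final class EntityConventions {

    /** Prefers a public field named exactly "id", otherwise one whose name ends with "Id". */
    static Optional<String> idProperty(Class<?> entityClass) {
        Field[] fields = entityClass.getFields(); // public fields only; order is unspecified
        Optional<String> exact = Arrays.stream(fields)
                .map(Field::getName)
                .filter("id"::equals)
                .findFirst();
        if (exact.isPresent()) {
            return exact;
        }
        return Arrays.stream(fields)
                .map(Field::getName)
                .filter(name -> name.endsWith("Id"))
                .findFirst();
    }

    /** A public field named "version" designates the version column. */
    static boolean hasVersionProperty(Class<?> entityClass) {
        return Arrays.stream(entityClass.getFields())
                .anyMatch(field -> field.getName().equals("version"));
    }

    // Example entity class without annotations.
    public static class Part {
        public long partId;
        public String name;
        public long version;
    }

    public static void main(String[] args) {
        System.out.println(idProperty(Part.class));         // Optional[partId]
        System.out.println(hasVersionProperty(Part.class)); // true
    }
}
```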

Here’s a simple entity:

@Entity
public class Product {
    @Id
    public long id;

    public String name;

    public double price;

    public double weight;
}

After you define the entity to represent the data, it is usually helpful to have your IDE generate a static metamodel class for it. By convention, static metamodel classes begin with the underscore ('_') character, followed by the entity name.

Because this beta is available well before the release of Jakarta Data 1.1, IDE support for this generation cannot be expected yet. However, a static metamodel class can be provided in the same form that an IDE would generate for the Product entity.

@StaticMetamodel(Product.class)
public interface _Product {
    String ID = "id";
    String NAME = "name";
    String PRICE = "price";
    String WEIGHT = "weight";

    NumericAttribute<Product, Long> id = NumericAttribute.of(
            Product.class, ID, long.class);
    TextAttribute<Product> name = TextAttribute.of(
            Product.class, NAME);
    NumericAttribute<Product, Double> price = NumericAttribute.of(
            Product.class, PRICE, double.class);
    NumericAttribute<Product, Double> weight = NumericAttribute.of(
            Product.class, WEIGHT, double.class);
}

The first half of the static metamodel class includes constants for each of the entity attribute names so that you don’t need to otherwise hardcode string values into your application. The second half of the static metamodel class provides a special instance for each entity attribute, from which you can build restrictions and sorting to apply to queries at run time. This capability enables many powerful operations with repository queries, but those features are deferred to a future beta.

A repository defines operations that are related to the Product entity. Your repository interface can inherit from built-in interfaces such as BasicRepository and CrudRepository to gain various general-purpose repository methods for inserting, updating, deleting, and querying for entities. In addition to these capabilities, the repository can define custom operations by using the static metamodel and annotations such as @Find or @Delete.

@Repository(dataStore = "java:app/jdbc/my-example-data")
public interface Products extends CrudRepository<Product, Long> {

    // Filtering is pre-defined at development time by the @Is annotation:
    @Find
    @OrderBy(_Product.PRICE)
    @OrderBy(_Product.NAME)
    List<Product> costingUpTo(@By(_Product.PRICE) @Is(AtMost.class) double maxPrice);

    // Constraint types, such as Like, can also pre-define filtering.
    // Allow additional filtering at run time by including a Restriction:
    @Find
    Page<Product> named(@By(_Product.NAME) Like namePattern,
                        Restriction<Product> filter,
                        Order<Product> sorting,
                        PageRequest pageRequest);

    // Retrieve a single entity attribute, identified by @Select:
    @Find
    @Select(_Product.PRICE)
    Optional<Double> priceOf(@By(_Product.ID) long productNum);

    // Retrieve multiple entity attributes as a Java record:
    @Find
    @Select(_Product.WEIGHT)
    @Select(_Product.NAME)
    Optional<WeightInfo> weightAndNameOf(@By(_Product.ID) long productNum);

    static record WeightInfo(double itemWeight, String itemName) {}
}

Here is an example of using the repository and static metamodel:

@DataSourceDefinition(name = "java:app/jdbc/my-example-data",
                      className = "org.postgresql.xa.PGXADataSource",
                      databaseName = "ExampleDB",
                      serverName = "localhost",
                      portNumber = 5432,
                      user = "${example.database.user}",
                      password = "${example.database.password}")
public class MyServlet extends HttpServlet {
    @Inject
    Products products;

    protected void doGet(HttpServletRequest req, HttpServletResponse resp)
            throws ServletException, IOException {
        // Insert:
        Product prod = ...
        prod = products.insert(prod);

        // Filter by supplying values only:
        List<Product> found = products.costingUpTo(50.0);

        // Compute filtering at run time:
        Page<Product> page1 = products.named(
                Like.suffix("printer"),
                Restrict.all(_Product.price.times(taxRate).plus(shipping).lessThan(250.0),
                             _Product.weight.times(kgToPounds).between(10.0, 20.0)),
                Order.by(_Product.price.desc(),
                         _Product.name.asc(),
                         _Product.id.asc()),
                PageRequest.ofSize(10));

        // Find one entity attribute: