J2ME, bluetooth, speech recognition, automation ChatGPT. Also checkout the book: javachatgptbook.comis available for download.

<featureManager>

<feature>restfulWS-4.0</feature>

</featureManager>Want to explore the potential of ARM (Advanced RISC Machine) architecture for your Jakarta EE workload? Our latest webinar 'Jakarta EE with ARM: Enhance Performance and Efficiency in the Cloud' is now available to watch on-demand on https://www.crowdcast.io/c/jakarta-ee-with-arm-july-2024

Want to explore the potential of ARM (Advanced RISC Machine) architecture for your Jakarta EE workload? Our latest webinar 'Jakarta EE with ARM: Enhance Performance and Efficiency in the Cloud' is now available to watch on-demand on https://www.crowdcast.io/c/jakarta-ee-with-arm-july-2024

Subscribe to airhacks.fm podcast via: spotify| iTunes| RSS

J2ME, bluetooth, speech recognition, automation ChatGPT. Also checkout the book: javachatgptbook.comis available for download.

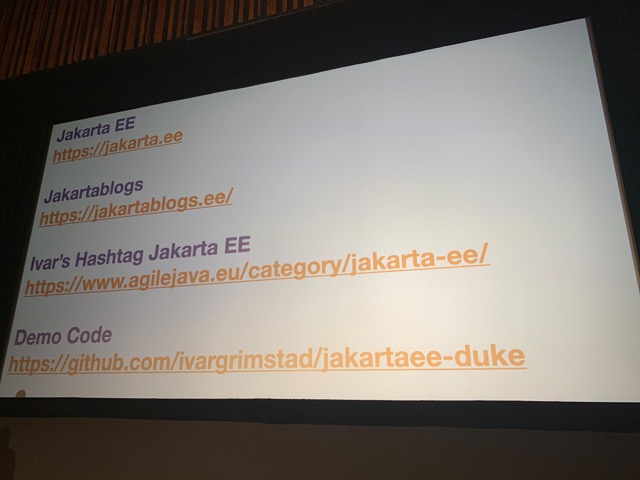

Welcome to issue number two hundred and thirty-eight of Hashtag Jakarta EE!

I have just arrived back home from JConf Dominica 2024, and have a couple of hours to pack my bags before heading out again for JCrete 2024. Busy times for a developer advocate, but so incredibly great to be able to connect with the Java communities all over the world.

(I don’t know if it was pure luck, or if the stars were aligned just right for me this time, but as it turned out, the only two days I was on the ground this week were the two days with Blue Screen of Death for the airlines.)

The first release candidate of Jakarta EE 11 will be published shortly. There are a couple of the specifications that have released service releases of their API artifacts, so we will gather these and release an RC1 of the Jakarta EE 11 APIs.

All the Jakarta EE 11 XML Schemas are publicly available at https://jakarta.ee/schemas/. Please check them out and let us know if you find something that needs to be corrected before the release is Final.

Another way of providing input, AND possibly winning a FREE T-shirt is to complete the 2024 Cloud Native Java Technical Survey.

A Java conference in the Dominican Republic sounds too good to be true. Well, I can tell you that it is a thing, and it is awesome! JConf Dominicana, The Caribbean Java Technologies Conference is organized by Java Dominicano, the Dominican Java User Group. It is a one-day conference with a half day of workshops the day before.

I hosted a 4-hour Jakarta EE workshop together with Eudris Cabrera. He provided Spanish translations of all the instructions for the participants and explained in Spanish where my English came short. We had a great time, and it looks like the participants enjoyed it as well if you judge from the happy faces in this photo.

Jakarta EE was very well represented at JConfDominicana this year. In addition to the workshop, Shabnam did a keynote titled Empowering Innovation: The Open Source Odyssey of Contribution and Collaboration with Jakarta EE.

The day before the conference, we went out to explore the city and ended up visiting a cigar factory where they had free tours of the facilities. It is amazing to see how cigars are hand-made this way. Our clothes will need a turn in the washer after the visit as almost everyone in there was smoking cigars while working.

It is always such a great experience to speak at conferences like JConfDominica that are organized by and for the local Java Community. I encourage everyone who employs Java developers anywhere in the world to support the local Java community.

Subscribe to airhacks.fm podcast via: spotify| iTunes| RSS

Project Panama, Vector API, Value Types, machine learning, benefits of nullability in API design and new datatypes like e.g. Float16 in Javais available for download.

In this tutorial, we will learn how to map your Data Transfer Objects (DTO) using the MapStruct framework and integrate it into a Jakarta EE application. Understanding DTO Objects DTO Objects are used to decouple the database model from the view that is transferred to the client. They are intended to be immutable objects, used ... Read more

The post How to Map Your DTO Objects with MapStruct appeared first on Mastertheboss.

Ready to explore the potential of ARM (Advanced RISC Machine) architecture for your Jakarta EE workload? Join our next free webinar:

Register: https://www.crowdcast.io/c/jakarta-ee-with-arm-july-2024

Ready to explore the potential of ARM (Advanced RISC Machine) architecture for your Jakarta EE workload? Join our next free webinar:

Register: https://www.crowdcast.io/c/jakarta-ee-with-arm-july-2024

The post AI Glossary for Java Developers appeared first on Thorben Janssen.

Most Java developers encounter problems when learning how to integrate AI into their applications using SpringAI, Langchain4J, or some other library. AI introduces many new terms, acronyms, and techniques you must understand to build a good system. I ran into the same issue when I started learning about AI. In this article, I did my...

The post AI Glossary for Java Developers appeared first on Thorben Janssen.

The 24.0.0.7-beta release introduces enhancements to Jakarta RESTful Web Services 4.0, including new API methods and media type values. It also includes enhanced HTTP request tracking and the beta release of the Audit 2.0 feature, which creates REST Handler records only when a REST Handler application is configured.

The Open Liberty 24.0.0.7-beta includes the following beta features (along with all GA features):

See also previous Open Liberty beta blog posts.

Jakarta RESTful Web Services provides a foundational API to develop web services based on the REST architectural pattern. The Jakarta Restful Web Services 4.0 update introduces several enhancements to make web service development more efficient and effective.

The new features, restfulWS-4.0 and restfulWSClient-4.0, are primarily aimed at application developers. This release brings incremental improvements to Jakarta RESTful Web Services. It provides the following new convenience API methods:

The containsHeaderString() method is added to ContainerRequestContext, ContainerResponseContext, and HttpHeaders to help determine whether a header contains a specified value.

The UriInfo.getMatchedResourceTemplate() method is a new addition that returns a full template for a request.

New MediaType values to handle specific media types are introduced, including APPLICATION_JSON_PATCH_JSON and APPLICATION_MERGE_PATCH_JSON.

These updates streamline the process of developing RESTful services. To implement these new features, add the following code to your server.xml file.

For the RESTful web services feature:

<featureManager>

<feature>restfulWS-4.0</feature>

</featureManager>For the RESTful web services client feature:

<featureManager>

<feature>restfulWSClient-4.0</feature>

</featureManager>For more information, see the Jakarta RESTful Web Services 4.0 specification and the Javadoc.

Open Liberty introduces a significant update that enhances the tracking of HTTP connections that are made to the server. With the Monitor-1.0 feature (monitor-1.0) and any Jakarta EE 10 feature that uses the servlet engine, Open Liberty can now record detailed information about HTTP requests into an HttpStatsMXBean. This includes the request method, response status, duration, HTTP route, and other attributes that align with the Open Telemetry HTTP metric semantic convention.

If the mpMetrics-5.0 or mpMetrics-5.1 feature is also active, Open Liberty creates corresponding HTTP metrics, such as http.server.request.duration, using a Timer type metric. To use these metrics effectively, configure histogram buckets explicitly for Timer and Histogram type metrics by using the MicroProfile Metrics feature. For more details, see the mp.metrics.distribution.timer.buckets property on the MicroProfile Config Properties page.

This update ensures that operations teams responsible for monitoring can now have a detailed and comprehensive view of HTTP requests, enhancing the monitoring and management capabilities within Open Liberty environments.

Previously, Open Liberty tracked servlet and JAX-RS/RESTful resource requests to the server as separate MBeans (servlet MBeans and REST MBeans). This data might then be forwarded to the mpMetrics feature to create corresponding metrics. Monitoring tools like Prometheus and Grafana could visualize this metric data to showcase server performance and responsiveness.

With the new update, HTTP connections are now tracked more comprehensively, combining request details into a unified HttpStatsMXBean. This approach simplifies monitoring and provides a more cohesive view of server performance, making it easier for operations teams to ensure optimal server responsiveness.

This enhancement is an auto-feature that activates with monitor-1.0 and any feature that uses the servlet engine currently supporting Jakarta EE 10 features like servlet-6.0, pages-3.1, and restfulWS-3.1. It also supports registering HTTP metrics with mpMetrics-5.x features. To enable this function, add the following to your server.xml file:

<featureManager>

<feature>monitor-1.0</feature>

<feature>servlet-6.0</feature>

<feature>mpMetrics-5.0</feature>

</featureManager>For detailed configuration, see the MicroProfile Config Properties page.

For more information about the HTTP semantic convention used, see the Open Telemetry HTTP semantic convention page.

Jakarta Faces is a Model-View-Controller (MVC) framework for building web applications, offering many convenient features, such as state management and input validation. The Jakarta Faces 4.1 (faces-4.1) update introduces a range of incremental improvements to enhance the developer experience. These enhancements include increased use of generics in FacesMessages and other locations, as well as improved CDI integration by requiring events for @Initialized, @BeforeDestroyed, and @Destroyed for built-in scopes like FlowScoped and ViewScoped. The current Flow can also now be injected into a backing bean. Finally, a new UUIDConverter is added to the API, which simplifies the handling of UUIDs.

This update also includes some deprecations and removals. The composite:extension tag and references to the Security Manager are removed, and the full state saving mechanism is deprecated. To enable the Jakarta Faces 4.1 feature in your application, add the following to your server.xml file:

<featureManager>

<feature>faces-4.1</feature>

</featureManager>For more information, see the Jakarta Faces 4.1 specification and the Javadoc.

The Audit 2.0 feature (audit-2.0) is now in beta release. The feature is designed for users that are not using REST Handler applications.

It provides the same audit records as the Audit 1.0 feature (audit-1.0) but it does not generate records for REST Handler applications.

Customers that need to keep audit records for REST Handler applications must continue to use the Audit 1.0 feature.

To enable the Audit 2.0 feature in your application, add the following to your server.xml file:

<featureManager>

<feature>audit-2.0</feature>

</featureManager>To try out these features, update your build tools to pull the Open Liberty All Beta Features package instead of the main release. The beta works with Java SE 21, Java SE 17, Java SE 11, and Java SE 8.

If you’re using Maven, you can install the All Beta Features package using:

<plugin>

<groupId>io.openliberty.tools</groupId>

<artifactId>liberty-maven-plugin</artifactId>

<version>3.10.3</version>

<configuration>

<runtimeArtifact>

<groupId>io.openliberty.beta</groupId>

<artifactId>openliberty-runtime</artifactId>

<version>24.0.0.7-beta</version>

<type>zip</type>

</runtimeArtifact>

</configuration>

</plugin>You must also add dependencies to your pom.xml file for the beta version of the APIs that are associated with the beta features that you want to try. For example, the following block adds dependencies for two example beta APIs:

<dependency>

<groupId>org.example.spec</groupId>

<artifactId>exampleApi</artifactId>

<version>7.0</version>

<type>pom</type>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>example.platform</groupId>

<artifactId>example.example-api</artifactId>

<version>11.0.0</version>

<scope>provided</scope>

</dependency>Or for Gradle:

buildscript {

repositories {

mavenCentral()

}

dependencies {

classpath 'io.openliberty.tools:liberty-gradle-plugin:3.8.3'

}

}

apply plugin: 'liberty'

dependencies {

libertyRuntime group: 'io.openliberty.beta', name: 'openliberty-runtime', version: '[24.0.0.7-beta,)'

}Or if you’re using container images:

FROM icr.io/appcafe/open-liberty:betaOr take a look at our Downloads page.

If you’re using IntelliJ IDEA, Visual Studio Code or Eclipse IDE, you can also take advantage of our open source Liberty developer tools to enable effective development, testing, debugging and application management all from within your IDE.

For more information on using a beta release, refer to the Installing Open Liberty beta releases documentation.

Let us know what you think on our mailing list. If you hit a problem, post a question on StackOverflow. If you hit a bug, please raise an issue.

The post How to define a repository with Jakarta Data and Hibernate appeared first on Thorben Janssen.

Repositories are a commonly used pattern for persistence layers. They abstract from the underlying data store or persistence framework and hide the technical details from your business code. Using Jakarta Data, you can easily define such a repository as an interface, and you’ll get an implementation of it based on Jakarta Persistence or Jakarta NoSQL....

The post How to define a repository with Jakarta Data and Hibernate appeared first on Thorben Janssen.

The Generational Z Garbage Collector (GenZGC) in JDK 21 represents a significant evolution in Java’s approach to garbage collection, aiming to enhance application performance through more efficient memory management. This advancement builds upon the strengths of the Z Garbage Collector (ZGC) by introducing a generational approach to garbage collection within the JVM.

Generational Hypothesis: Generational ZGC leverages the “weak generational hypothesis,” which posits that most objects die young. By dividing the heap into young and old regions, GenZGC can focus its efforts on the young region where most objects become unreachable, thereby optimizing garbage collection efficiency and reducing CPU overhead

Heap Division and Collection Cycles: The heap is divided into two logical parts: the young generation and the old generation. Newly allocated objects are placed in the young generation, which is frequently scanned for garbage collection. Objects that survive several collection cycles are then promoted to the old generation, which is scanned less often. This division allows for more frequent collection of short-lived objects while reducing the overhead of collecting long-lived objects.

Throughput and Latency Internal performance tests have shown that Generational ZGC offers about a 10% improvement in throughput over its single-generation predecessors in both JDK 17 and JDK 21, despite a slight regression in average latency measured in microseconds. However, the most notable improvement is observed in maximum pause times, with a 10-20% improvement in P99 pause times. This reduction in pause times significantly enhances the predictability and responsiveness of applications, particularly those requiring low latency.

A crucial advantage of Generational ZGC is its ability to mitigate allocation stalls, which occur when the rate of object allocation outpaces the garbage collector’s ability to reclaim memory. This capability is particularly beneficial in high-throughput applications, such as those using Apache Cassandra, where Generational ZGC maintains performance stability even under high concurrency levels.

Transition and Adoption: While JDK 21 introduces Generational ZGC, single-generation ZGC remains the default for now. Developers can opt into using Generational ZGC through JVM arguments (`-XX:+UseZGC -XX:+ZGenerational`). The plan is for Generational ZGC to eventually become the default, with single-generation ZGC being deprecated and removed. This phased approach allows developers to gradually adapt to the new system.

Diagnostic and Profiling Tools: For those evaluating or transitioning to Generational ZGC, tools like GC logging and JDK Flight Recorder (JFR) offer valuable insights into GC behavior and performance. GC logging, accessible via the `-Xlog` argument, and JFR data can be analyzed in JDK Mission Control (JMC) to assess garbage collection behavior and application performance implications.

Generational ZGC represents a significant step forward in Java’s garbage collection technology, offering improved throughput, reduced pause times, and enhanced overall application performance. Its design reflects a deep understanding of application memory management needs, particularly the efficient collection of short-lived objects. As Java applications continue to grow in complexity and scale, the adoption of Generational ZGC could be a pivotal factor in achieving the performance goals of modern, high-demand applications.

The transition from Java 17 to Java 21 heralds a new era of Java development, characterized by significant improvements in performance, security, and developer-friendly features. The API changes and enhancements discussed above are just the tip of the iceberg, with Java 21 offering a wealth of other features and improvements designed to cater to the evolving needs of modern application development. As developers, embracing Java 21 and leveraging its new features and improvements can significantly impact the efficiency, performance, and security of Java applications. Whether it’s through the enhanced I/O capabilities, improved serialization exception handling, or the new Unicode support in the `Character` class, Java 21 offers a compelling upgrade path from Java 17, promising to enhance the Java ecosystem for years to come. In conclusion, the evolution from Java 17 to Java 21 is a testament to the ongoing commitment to advancing Java as a language and platform. By exploring and adopting these new features, developers can ensure their Java applications remain cutting-edge, secure, and performant in the face of future challenges.

As we all know, Java is a constantly evolving programming language. With each new release, we get a plethora of new features, enhancements, and sometimes, a few deprecations. In this blog post, we will analyse the significant differences between Java 17 and Java 21 API. We will navigate through the changes, with a focus on deprecated features, new library additions, security enhancements, and performance improvements.

Released on September 19, 2023, Java 21 is celebrated for its comprehensive set of specifications that define the behaviour of the Java language, API, and virtual machine. It represents a Long Term Support (LTS) version, ensuring extended updates and support from various vendors, making it a pivotal release for developers and organizations alike.

One of the key aspects of Java’s evolution is the deprecation of features that are no longer relevant or have been replaced by more efficient alternatives. In the transition from Java 17 to Java 21, several features, methods and classes have been deprecated or removed. These are the following:

ObjectOutputStream.PutFieldwrite(ObjectOutput) – Marked for removalEnum.finalize() – Deprecated and marked for removalRuntime.exec(String) – DeprecatedRuntime.exec(String, String[]) – DeprecatedRuntime.exec(String, String[], File) – DeprecatedRuntime.runFinalization() – Deprecated and marked for removalSystem.runFinalization() – Deprecated and marked for removalThreadDeath – Deprecated and marked for removalThreadGroup.allowThreadSuspension(boolean) – RemovedThread.countStackFrames() – RemovedThread.getId() – DeprecatedThread.resume() – RemovedThread.stop() – Marked for removalThread.suspend() – RemovedURL(String) – DeprecatedURL(String, String, String) – DeprecatedURL(String, String, int, String) – DeprecatedURL(String, String, int, String, URLStreamHandler) – DeprecatedURL(URL, String) – DeprecatedURL(URL, String, URLStreamHandler) – Deprecatedjava.security.spec.PSSParameterSpec.DEFAULT – DeprecatedPSSParameterSpec(int) – DeprecatedMemoryMXBean.getObjectPendingFinalizationCount() – DeprecatedAlso the following Classes were removed

ClassSpecializer.FactoryClassSpecializer.SpeciesDataCompilerFdLibm.CbrtFdLibm.HypotFdLibm.PowMLetContentMLetPrivateMLetMLetMBeanjavax.management.remote.rmi.RMIConnector.getMBeanServerConnection(Subject)javax.management.remote.RMIIIOPServerImpljavax.management.remote.JMXConnector.getMBeanServerConnection(Subject)ProcessBuilder processBuilder = new ProcessBuilder(command, arguments); Process process = processBuilder.start(); // Handle process output and errors as needed

String threadName = Thread.currentThread().getName(); // Get current thread name boolean isThreadAlive = Thread.currentThread().isAlive(); // Check if thread is alive

URL url = new URL("https://example.com/", MyClassLoader.class.getClassLoader());Java 21 introduces several new features

Transitioning from `final` to `sealed`, this change in the Console class introduces more controlled subclassing, allowing developers to extend functionality within a restricted hierarchy

The introduction of the `charset()` method in `PrintStream` allows developers to ascertain the charset used by the PrintStream, enhancing support for internationalization.

Let’s see some specific usage scenarios where the new features and API changes introduced in Java 21 can be particularly beneficial. These scenarios will illustrate how developers can leverage these enhancements to address real-world development challenges.

Scenario: A developer is working on a network application that requires the efficient transfer of large files from a server to multiple clients. The application needs to maximize throughput and minimize latency.

Java 21 Solution: Utilize the `transferTo(OutputStream)` method in classes such as `BufferedInputStream` for efficient data transfer. This method allows for direct transfer of bytes between streams, reducing the overhead of copying data to an intermediate buffer.

import java.io.*;

import java.net.ServerSocket;

import java.net.Socket;

public class FileServer {

public static void main(String[] args) throws IOException {

try (ServerSocket serverSocket = new ServerSocket( /* port number */ );

Socket clientSocket = serverSocket.accept();

BufferedInputStream fileInput = new BufferedInputStream(new FileInputStream("largeFile.bin"));

OutputStream clientOutput = clientSocket.getOutputStream()) {

fileInput.transferTo(clientOutput);

System.out.println("File transferred successfully to client.");

} catch (IOException e) {

e.printStackTrace();

}

}

}

Benefits: This approach minimizes the I/O overhead and system resource utilization, leading to faster file transfers and improved application performance.

Scenario: A development team is building a custom framework that relies heavily on reflection for dynamic operation. They need a way to debug and validate the access levels of various members (methods, fields, etc.) at runtime to ensure correct behavior.

Java 21 Solution: Use the `accessFlags()` method from reflective classes (`Method`, `Field`) to get detailed access level information. This can help in logging, debugging, or runtime validation of application components.

import java.lang.reflect.Method;

import java.lang.reflect.Modifier;

public class MyClassInspector {

public static void main(String[] args) throws ClassNotFoundException {

Class<?> clazz = Class.forName("MyClass"); // Replace "MyClass" with the actual class name

Method[] methods = clazz.getDeclaredMethods();

for (Method method : methods) {

int flags = method.getModifiers();

System.out.printf("Method: %s, Access Flags: %s%n", method.getName(), Modifier.toString(flags));

}

}

}

Benefits: This capability enhances debugging and introspection, allowing developers to programmatically check and handle access levels, leading to more robust and secure applications.

Scenario: A social media platform aims to enhance its text processing capabilities to better support emojis, ensuring that user-generated content including emojis is correctly validated and displayed across different devices.

Java 21 Solution: Leverage the expanded Unicode and emoji support in the `Character` class to identify, validate, and process emojis within user content.

String userComment = "Hello world!";

userComment.codePoints().forEach(cp -> {

if (Character.isEmoji(cp)) {

System.out.printf("Found an emoji: %s%n", new String(Character.toChars(cp)));

}

});

Benefits: By accurately processing emojis, the platform can enhance user experience, ensuring correct display and interaction with emojis across various devices and locales, thereby supporting global user engagement. ### Conclusion The scenarios outlined above demonstrate the practical application of Java 21’s new features in addressing common development challenges. Whether it’s through more efficient data handling, improved debugging and validation mechanisms, or enhanced support for global character sets and emojis, Java 21 provides developers with powerful tools to build more efficient, secure, and user-friendly applications. As Java continues to evolve, staying abreast of these changes and understanding how to apply them in real-world contexts is crucial for developers looking to maximize their productivity and leverage the full power of the Java platform.

The Generational Z Garbage Collector (GenZGC) in JDK 21 represents a significant evolution in Java’s approach to garbage collection. It builds upon the Z Garbage Collector (ZGC) by introducing a generational approach, aiming to enhance application performance through more efficient memory management.

Generational ZGC leverages the “weak generational hypothesis,” which suggests that most objects die young. By dividing the heap into young and old regions, GenZGC can focus on the young region where most objects become unreachable, thereby optimizing garbage collection efficiency and reducing CPU overhead. This division allows for more frequent collection of short-lived objects while reducing the overhead of collecting long-lived objects. Internal performance tests have shown that Generational ZGC offers about a 10% improvement in throughput over its single-generation predecessors. A crucial advantage is its ability to mitigate allocation stalls, which significantly benefits high-throughput applications.

While JDK 21 introduces Generational ZGC, single-generation ZGC remains the default for now. Developers can opt into using Generational ZGC through JVM arguments. The plan is for Generational ZGC to eventually become the default. Tools like GC logging and JDK Flight Recorder (JFR) offer valuable insights into GC behavior and performance for those evaluating or transitioning to Generational ZGC.

Generational ZGC represents a significant step forward in Java’s garbage collection technology, offering improved throughput, reduced pause times, and enhanced overall application performance. Its design reflects a deep understanding of application memory management needs. As Java applications continue to grow in complexity and scale, the adoption of Generational ZGC could be a pivotal factor in achieving the performance goals of modern, high-demand applications.

The transition from Java 17 to Java 21 heralds a new era of Java development, characterized by significant improvements in performance, security, and developer-friendly features. The API changes and enhancements discussed above are just the tip of the iceberg, with Java 21 offering a wealth of other features and improvements designed to cater to the evolving needs of modern application development. As developers, embracing Java 21 and leveraging its new features and improvements can significantly impact the efficiency, performance, and security of Java applications. Whether it’s through the enhanced I/O capabilities, improved serialization exception handling, or the new Unicode support in the `Character` class, Java 21 offers a compelling upgrade path from Java 17, promising to enhance the Java ecosystem for years to come. In conclusion, the evolution from Java 17 to Java 21 is a testament to the ongoing commitment to advancing Java as a language and platform. By exploring and adopting these new features, developers can ensure their Java applications remain cutting-edge, secure, and performant in the face of future challenges.

In this article, we will provide an overview of the upcoming Jakarta RESTful Web Services 4.0 features. We will show how to test the latest version of this API on WildFly and how to run some examples with it. What’s New in Jakarta REST 4.0: Key Features and Enhancements The latest Jakarta REST 4.0 release ... Read more

The post Getting started with Jakarta REST Services 4.0 appeared first on Mastertheboss.

The 24.0.0.6-beta release includes previews of the Jakarta Validation and Jakarta Data implementations, both of which are part of Jakarta EE 11. The release also includes enhancements for histogram and timer metrics in MicroProfile 3.0 and 4.0 and InstantOn support for distributed HTTP session caching.

The Open Liberty 24.0.0.6-beta includes the following beta features (along with all GA features):

See also previous Open Liberty beta blog posts.

Jakarta Validation provides a mechanism to validate constraints that are imposed on Java objects by using annotations. The most noticeable change in version 3.1 is the name change, from Jakarta Bean Validation to Jakarta Validation. This version also includes explicit support for validating Java record classes, which were added in Java 16. Records reduce much of the boilerplate code in simple data classes, and pair nicely with Jakarta Validation.

The following examples demonstrate the simplicity of defining a Java record with Jakarta Validation annotations, as well as performing validation.

In this example, an Employee record is defined with two fields, empid and email.

public record Employee(@NotEmpty String empid, @Email String email) {}The @NotEmpty annotation specifies that empid cannot be an empty string, while the @Email annotation specifies that the value for email must be a properly formatted email address.

In this example, a validator is created to check the specified Employee values against the constraints that were set in the previous example.

Employee employee = new Employee("12432", "mark@example.com");

Set<ConstraintViolation<Employee>> violations = validator.validate(employee);Jakarta Data is a new Jakarta EE specification being developed in the open that aims to standardize the popular Data Repository pattern across various providers. Open Liberty 24.0.0.6-beta includes the Jakarta Data 1.0 release candidate 1, which decouples sorting from pagination, improves the static metamodel, and completes the initial definition of Jakarta Data Query Language (JDQL).

The Open Liberty beta includes a test implementation of Jakarta Data that we are using to experiment with proposed specification features. You can try out these features and provide feedback to influence the Jakarta Data 1.0 specification as it continues to be developed. The test implementation currently works with relational databases and operates by redirecting repository operations to the built-in Jakarta Persistence provider.

Jakarta Data 1.0 Release Candidate 1 completes the definition of Jakarta Data Query Language (JDQL) as a subset of Jakarta Persistence Query Language (JPQL). JDQL allows basic comparison and update operations on a single type of entity (an entity identifier variable is not used), as well as the ability to perform deletion and count operations. Find operations in JDQL consist of SELECT, FROM, WHERE, and ORDER BY clauses, all of which are optional.

Jakarta Data 1.0 Release Candidate 1 decouples sorting from pagination, allowing you to request pages of results without needing to duplicate the specification of the entity type that is queried.

The static metamodel, which allows for more type-safe usage, is streamlined in Release Candidate 1 to allow the use of interface classes with constant fields to define the metamodel.

To use these capabilities, you need an Entity and a Repository.

Start by defining an entity class that corresponds to your data. With relational databases, the entity class corresponds to a database table and the entity properties (public methods and fields of the entity class) generally correspond to the columns of the table. An entity class can be:

Annotated with jakarta.persistence.Entity and related annotations from Jakarta Persistence

A Java class without entity annotations, in which case the primary key is inferred from an entity property that is named id or ends with Id and an entity property that is named version designates an automatically incremented version column.

You define one or more repository interfaces for an entity, annotate those interfaces as @Repository, and inject them into components by using @Inject. The Jakarta Data provider supplies the implementation of the repository interface for you.

The following example shows a simple entity:

@Entity

public class Product {

@Id

public long id;

public boolean isDiscounted;

public String name;

public float price;

@Version

public long version;

}Here is a repository that defines operations relating to the entity. Your repository interface can inherit from built-in interfaces, such as BasicRepository and CrudRepository, to gain various general-purpose repository methods for inserting, updating, deleting, and querying for entities. You can add methods to further customize it.

@Repository(dataStore = "java:app/jdbc/my-example-data")

public interface Products extends BasicRepository<Product, Long> {

@Insert

Product add(Product newProduct);

// query-by-method name pattern:

List<Product> findByNameIgnoreCaseContains(String searchFor, Order<Product> orderBy);

// parameter based query that does not require -parameters because it explicitly specifies the name

@Find

Page<Product> find(@By("isDiscounted") boolean onSale,

PageRequest pageRequest,

Order <Product> orderBy);

// find query in JDQL that requires compilation with -parameters to preserve parameter names

@Query("SELECT price FROM Product WHERE id=:productId")

Optional<Float> getPrice(long productId);

// update query in JDQL:

@Query("UPDATE Product SET price = price - (?2 * price), isDiscounted = true WHERE id = ?1")

boolean discount(long productId, float discountRate);

// delete query in JDQL:

@Query("DELETE FROM Product WHERE name = ?1")

int discontinue(String name);

}Observe that the repository interface includes type parameters in PageRequest<Product> and Order<Product>. These parameters help ensure that the page request and sort criteria are for a Product entity rather than some other entity. To accomplish this, you can optionally define a static metamodel class for the entity (or various IDEs might generate one for you after the 1.0 specification is released). Here is one that can be used with the Product entity:

@StaticMetamodel(Product.class)

public interface _Product {

String ID = "id";

String IS_DISCOUNTED = "isDiscounted";

String NAME = "name";

String PRICE = "price";

String VERSION = "version";

SortableAttribute<Product> id = new SortableAttributeRecord(ID);

SortableAttribute<Product> isDiscounted = new SortableAttributeRecord(IS_DISCOUNTED);

TextAttribute<Product> name = new TextAttributeRecord(NAME);

SortableAttribute<Product> price = new SortableAttributeRecord(PRICE);

SortableAttribute<Product> version = new SortableAttributeRecord(VERSION);

}The following example shows the repository and static metamodel being used:

@DataSourceDefinition(name = "java:app/jdbc/my-example-data",

className = "org.postgresql.xa.PGXADataSource",

databaseName = "ExampleDB",

serverName = "localhost",

portNumber = 5432,

user = "${example.database.user}",

password = "${example.database.password}")

public class MyServlet extends HttpServlet {

@Inject

Products products;

protected void doGet(HttpServletRequest req, HttpServletResponse resp)

throws ServletException, IOException {

// Insert:

Product prod = ...

prod = products.add(prod);

// Find the price of one product:

price = products.getPrice(productId).orElseThrow();

// Find all, sorted:

List<Product> all = products.findByNameIgnoreCaseContains(searchFor, Order.by(

_Product.price.desc(),

_Product.name.asc(),

_Product.id.asc()));

// Find the first 20 most expensive products on sale:

Page<Product> page1 = products.find(onSale, PageRequest.ofSize(20), Order.by(

_Product.price.desc(),

_Product.name.asc(),

_Product.id.asc()));

...

}

}This release introduces MicroProfile Config properties for MicroProfile 3.0 and 4.0 that are used for configuring the statistics that are tracked and outputted by the histogram and timer metrics. These changes are already available in MicroProfile Metrics 5.1.

In previous MicroProfile Metrics 3.0 and 4.0 releases, histogram and timer metrics tracked only the following values:

Min/max recorded values

The sum of all values

The count of the recorded values

A static set of percentiles for the 50th, 75th, 95th, 98th, 99th and 99.9th percentile.

These values are output to the /metrics endpoint in Prometheus format.

The new properties can define a custom set of percentiles as well as custom set of histogram buckets for the histogram and timer metrics. Other new configuration properties can enable a default set of histogram buckets, including properties that define an upper and lower bound for the bucket set.

With these properties, you can define a semicolon-separated list of value definitions that use the following syntax:

<metric name>=<value-1>[,<value-2>…<value-n>]

Some properties can accept multiple values for a given metric name, while others can accept only a single value.

You can use an asterisk ( *) as a wildcard at the end of the metric name.

Property |

Description |

mp.metrics.distribution.percentiles |

Defines a custom set of percentiles for matching Histogram and Timer metrics to track and output. Accepts a set of integer and decimal values for a metric name pairing. Can be used to disable percentile output if no value is provided with a metric name pairing. |

mp.metrics.distribution.histogram.buckets |

Defines a custom set of (cumulative) histogram buckets for matching Histogram metrics to track and output. Accepts a set of integer and decimal values for a metric name pairing. |

mp.metrics.distribution.timer.buckets |

Defines a custom set of (cumulative) histogram buckets for matching Timer metrics to track and output. Accepts a set of decimal values with a time unit appended (that is, ms, s, m, h) for a metric name pairing. |

mp.metrics.distribution.percentiles-histogram.enabled |

Configures any matching Histogram or Timer metric to provide a large set of default histogram buckets to allow for percentile configuration with a monitoring tool. Accepts a true/false value for a metric name pairing. |

mp.metrics.distribution.histogram.max-value |

When percentile-histogram is enabled for a Timer, this property defines an upper bound for the buckets reported. Accepts a single integer or decimal value for a metric name pairing. |

mp.metrics.distribution.histogram.min-value |

When percentile-histogram is enabled for a Timer, this property defines a lower bound for the buckets reported. Accepts a single integer or decimal value for a metric name pairing. |

mp.metrics.distribution.timer.max-value |

When percentile-histogram is enabled for a Histogram, this property defines an upper bound for the buckets reported. Accepts a single decimal value with a time unit appended (that is, ms, s, m, h) for a metric name pairing. Accepts a single decimal value with a time unit appended (that is, ms, s, m, h) for a metric name pairing. |

mp.metrics.distribution.timer.min-value |

When percentile-histogram is enabled for a Histogram, this property defines a lower bound for the buckets reported. Accepts a single decimal value with a time unit appended (that is, ms, s, m, h) for a metric name pairing. |

You can define the mp.metrics.distribution.percentiles property similar to the following example.

mp.metrics.distribution.percentiles=alpha.timer=0.5,0.7,0.75,0.8;alpha.histogram=0.8,0.85,0.9,0.99;delta.*=

This property creates the alpha.timer timer metric to track and output the 50th, 70th, 75th, and 80th percentile values. The alpha.histogram histogram metric outputs the 80th, 85th, 90th, and 99th percentile values. Percentiles for any histogram or timer metric that matches with delta.* are disabled.

We’ll expand on this example and define histogram buckets for the alpha.timer timer metric by using the mp.metrics.distribution.timer.buckets property.

mp.metrics.distribution.timer.buckets=alpha.timer=100ms,200ms,1s

This configuration tells the metrics runtime to track and output the count of durations that fall within 0-100ms, 0-200ms and 0-1 seconds. This output is due to the histogram buckets working in a cumulative fashion.

The corresponding prometheus output for the alpha.timer metric at the /metrics REST endpoint is similar to the following example:

# TYPE application_alpha_timer_mean_seconds gauge

application_alpha_timer_mean_seconds 2.9700022497975187

# TYPE application_alpha_timer_max_seconds gauge

application_alpha_timer_max_seconds 5.0

# TYPE application_alpha_timer_min_seconds gauge

application_alpha_timer_min_seconds 1.0

# TYPE application_alpha_timer_stddev_seconds gauge

application_alpha_timer_stddev_seconds 1.9997750210918204

# TYPE alpha_timer_seconds histogram (1)

application_alpha_timer_seconds_bucket{le="0.1"} 0.0 (2)

application_alpha_timer_seconds_bucket{le="0.2"} 0.0 (2)

application_alpha_timer_seconds_bucket{le="1.0"} 1.0 (2)

application_alpha_timer_seconds_bucket{le="+Inf"} 2.0 (2) (3)

application_alpha_timer_seconds_count 2

application_alpha_timer_seconds_sum 6.0

application_alpha_timer_seconds{quantile="0.5"} 1.0

application_alpha_timer_seconds{quantile="0.7"} 5.0

application_alpha_timer_seconds{quantile="0.75"} 5.0

application_alpha_timer_seconds{quantile="0.8"} 5.0

| 1 | The Prometheus metric type is histogram. Both the quantiles/percentile and buckets are represented under this type. |

| 2 | The le tag represents less than and is for the defined buckets, which are converted to seconds. |

| 3 | Prometheus requires that a +Inf bucket counts all hits. |

Open Liberty InstantOn provides fast startup times for MicroProfile and Jakarta EE applications. With InstantOn, your applications can start in milliseconds, without compromising on throughput, memory, development-production parity, or Java language features. InstantOn uses the Checkpoint/Restore In Userspace (CRIU) feature of the Linux kernel to take a checkpoint of the JVM that can be restored later.

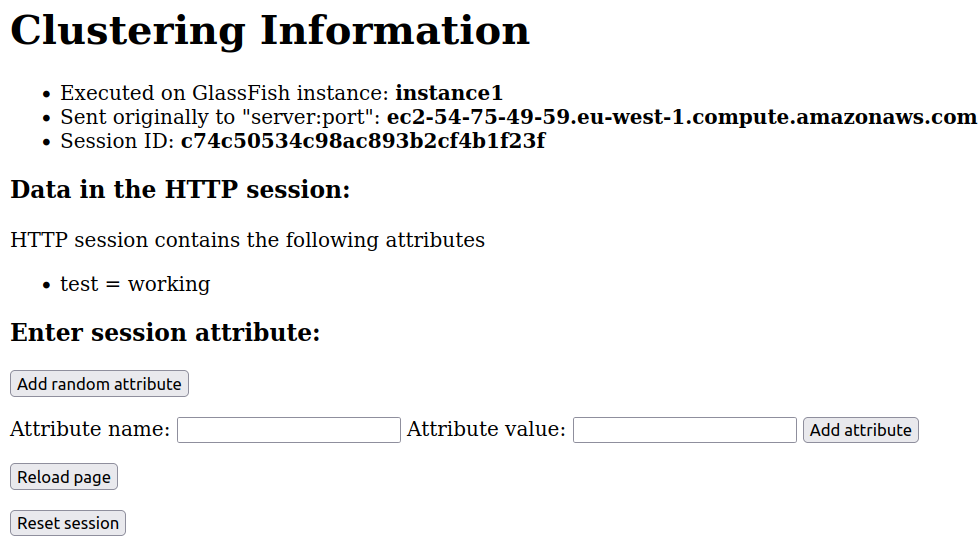

The 24.0.0.6-beta release provides InstantOn support for the JCache Session Persistence feature. This feature uses a JCache provider to create a distributed in-memory cache. Distributed session caching is achieved when the server is connected to at least one other server to form a cluster. Open Liberty servers can behave in the following ways in a cluster.

Client-server model: An Open Liberty server can act as the JCache client and connect to a dedicated JCache server.

Peer-to-Peer model: An Open Liberty server can connect with other Open Liberty servers that are also running with the JCache Session Persistence feature and configured to be part of the same cluster.

To enable JCache Session Persistence, the sessionCache-1.0 feature must be enabled in your server.xml file:

<feature>sessionCache-1.0</feature>You can configure the client/server model in the server.xml file, similar to the following example.

<library id="InfinispanLib">

<fileset dir="${shared.resource.dir}/infinispan" includes="*.jar"/>

</library>

<httpSessionCache cacheManagerRef="CacheManager"/>

<cacheManager id="CacheManager">

<properties

infinispan.client.hotrod.server_list="infinispan-server:11222"

infinispan.client.hotrod.auth_username="sampleUser"

infinispan.client.hotrod.auth_password="samplePassword"

infinispan.client.hotrod.auth_realm="default"

infinispan.client.hotrod.sasl_mechanism="PLAIN"

infinispan.client.hotrod.java_serial_whitelist=".*"

infinispan.client.hotrod.marshaller=

"org.infinispan.commons.marshall.JavaSerializationMarshaller"/>

<cachingProvider jCacheLibraryRef="InfinispanLib" />

</cacheManager>You can configure the peer-to-peer model in the server.xml file, similar to the following example.

<library id="JCacheLib">

<file name="${shared.resource.dir}/hazelcast/hazelcast.jar"/>

</library>

<httpSessionCache cacheManagerRef="CacheManager"/>

<cacheManager id="CacheManager" >

<cachingProvider jCacheLibraryRef="JCacheLib" />

</cacheManager>Note:

To provide InstantOn support for the peer-to-peer model by using Infinispan as a JCache Provider, you must use Infinispan 12 or later. You must also enable MicroProfile Reactive Streams 3.0 or later and MicroProfile Metrics 4.0 or later in the server.xml file, in addition to the JCache Session Persistence feature.

The environment can provide vendor-specific JCache configuration properties when the server is restored from the checkpoint. The following configuration uses server list, username, and password values as variables defined in the restore environment.

<httpSessionCache libraryRef="InfinispanLib">

<properties infinispan.client.hotrod.server_list="${INF_SERVERLIST}"/>

<properties infinispan.client.hotrod.auth_username="${INF_USERNAME}"/>

<properties infinispan.client.hotrod.auth_password="${INF_PASSWORD}"/>

<properties infinispan.client.hotrod.auth_realm="default"/>

<properties infinispan.client.hotrod.sasl_mechanism="PLAIN"/>

</httpSessionCache>To try out these features, update your build tools to pull the Open Liberty All Beta Features package instead of the main release. The beta works with Java SE 22, Java SE 21, Java SE 17, Java SE 11, and Java SE 8.

If you’re using Maven, you can install the All Beta Features package by using:

<plugin>

<groupId>io.openliberty.tools</groupId>

<artifactId>liberty-maven-plugin</artifactId>

<version>3.10.3</version>

<configuration>

<runtimeArtifact>

<groupId>io.openliberty.beta</groupId>

<artifactId>openliberty-runtime</artifactId>

<version>24.0.0.6-beta</version>

<type>zip</type>

</runtimeArtifact>

</configuration>

</plugin>You must also add dependencies to your pom.xml file for the beta version of the APIs that are associated with the beta features that you want to try. For example, the following block adds dependencies for two example beta APIs:

<dependency>

<groupId>org.example.spec</groupId>

<artifactId>exampleApi</artifactId>

<version>7.0</version>

<type>pom</type>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>example.platform</groupId>

<artifactId>example.example-api</artifactId>

<version>11.0.0</version>

<scope>provided</scope>

</dependency>Or for Gradle:

buildscript {

repositories {

mavenCentral()

}

dependencies {

classpath 'io.openliberty.tools:liberty-gradle-plugin:3.8.3'

}

}

apply plugin: 'liberty'

dependencies {

libertyRuntime group: 'io.openliberty.beta', name: 'openliberty-runtime', version: '[24.0.0.6-beta,)'

}Or if you’re using container images:

FROM icr.io/appcafe/open-liberty:betaOr take a look at our Downloads page.

If you’re using IntelliJ IDEA, Visual Studio Code or Eclipse IDE, you can also take advantage of our open source Liberty developer tools to enable effective development, testing, debugging, and application management all from within your IDE.

For more information on using a beta release, refer to the Installing Open Liberty beta releases documentation.

Let us know what you think on our mailing list. If you hit a problem, post a question on StackOverflow. If you hit a bug, please raise an issue.

In this article, you will learn about:

Initially, I anticipated the article would be short, but it ended up being quite lengthy. You could think of it as a missing chapter from the “Beginning Helidon” book I co-authored with my colleagues. Yes, it’s a bit cheesy to advertise the book in the first paragraph. You might assume that promoting the book was my sole motivation for writing this article. Admittedly, it’s a significant motivator, but not the only one.

In the Helidon support channels, we frequently encounter questions about migrating Spring Boot applications to Helidon. To address these inquiries, we concluded that creating a Helidon version of the well-known Spring Petclinic demo application and documenting the migration strategies and challenges would be the best approach. I volunteered for the task because of my previous experience with Spring programming and because I hadn’t engaged in real programming for quite some time, and I wanted to demonstrate that I still could. Whether I succeeded or not, you readers can decide after reviewing the work. Perhaps I shouldn’t have taken it on. You can find the result here. Anyway, enough philosophical musings; let’s get down to business.

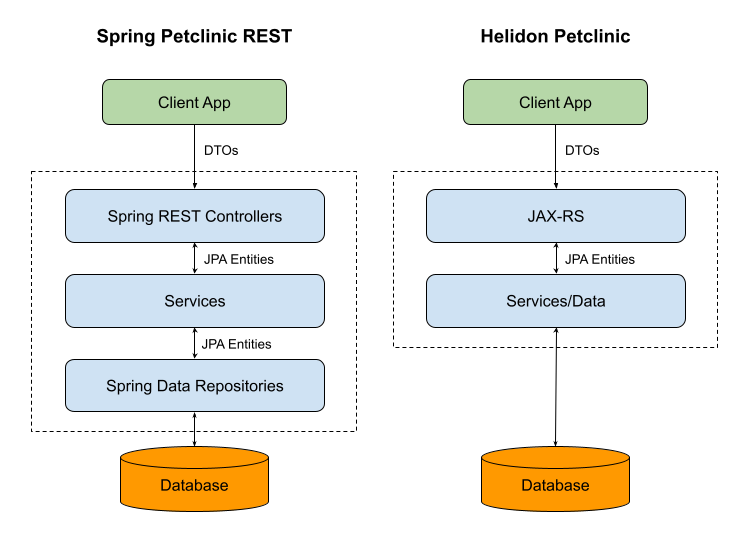

When I began my research, I started with the original Spring Petclinic. However, it seemed a bit outdated to me, so I explored other Petclinic forks available at https://github.com/spring-petclinic. One that caught my attention was the Spring Petclinic Rest, which functions as a RESTful service. Its architecture aligns well with the concepts of Helidon. Additionally, it features an Angular-based UI in a separate project. The plan was to develop a Helidon-based backend to complement the frontend project for demonstration purposes.

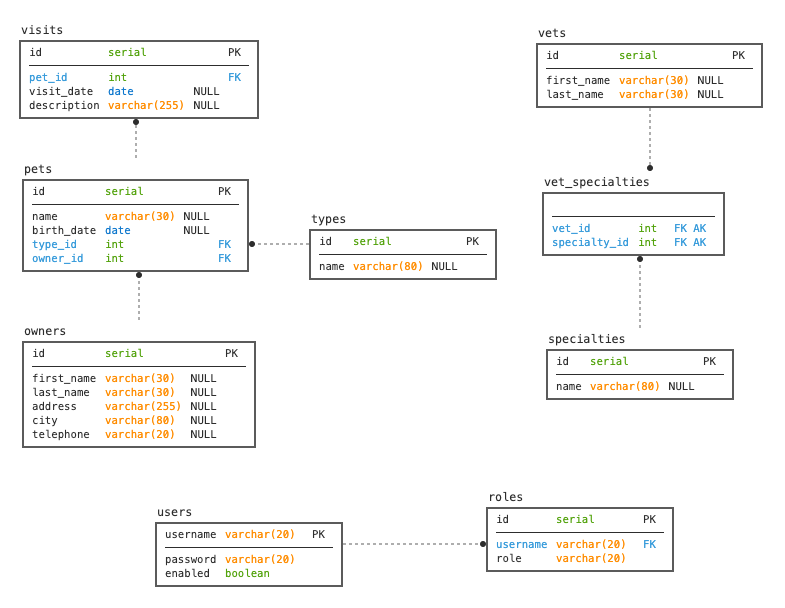

The Spring Petclinic Rest project is thoroughly documented in its README.md. For convenience, I’ll provide some basic design concepts here:

openapi-generator-maven-plugin.There are two data models:

And three layers:

Helidon Petclinic is a project I created. You can check it out here. The README.md contains information about how to build and run it.

My goal was to preserve the design and structure of the application as much as possible. In the end, I achieved a layer structure very similar to the original. The only significant change is an optimization related to the database layer. I perform database operations in the service layer, effectively integrating it with the database layer. The original application’s service layer methods typically involved only a single line call to the data repository, so this made sense to me. I draw a picture (see below) to help you understand the difference.

Spring and Helidon are different frameworks. Spring is built around the proprietary Spring Injection. Although it integrates with some open-source libraries and standards, most of its features are Spring-specific. On the other hand, Helidon (Helidon MP) is built on top of Enterprise Java standards such as Jakarta EE and MicroProfile, which technically makes it more open. There is some overlap in third-party libraries. Both Spring and Helidon use JPA, which will make our database layer migration easier. Additionally, Jackson and Mockito can be used in both frameworks. But I would recommend using Jakarta equivalents of Jackson for better Jakarta compatibility. In the table below, I have listed Spring components and their corresponding Helidon equivalents, which I’ll use in my project.

| Spring | Helidon |

|---|---|

| Spring Injection | Jakarta Contexts and Dependency Injection (CDI) |

| Spring REST Controller | Jakarta RESTful Web Services (JAX-RS) |

| Spring Data | Jakarta Persistence (JPA) |

| Spring Open API generator | Helidon Open API generator |

| Spring Tests Framework | Helidon Tests |

| Jackson | Jakarta JSON Processing (JSON-P) and Jakarta JSON Binding (JSON-B) |

| Spring Configuration | MicroProfile Config |

I had to make some compromises. For simplicity’s sake, I only added support for HSQL. I also omitted security support. Basic Authentication with passwords stored in the database is not what I would recommend using; it falls far short of modern security standards. I may consider supporting security in future versions of the project if there is demand for it.

The JPA model comprises a set of JPA entities. JPA is a standard, and one of the beauties of standards is that you don’t need to alter things when migrating code to another runtime. I made only minor adjustments to these classes, mainly replacing Spring-specific features with their Helidon equivalents. I replaced the usage of ToStringCreator in toString() methods with code generated by my IDE (IntelliJ Idea rules!). Additionally, I replaced instances of PropertiesComparator.sort() with Collections.sort().

Spring code:

public List<Pet> getPets() {

List<Pet> sortedPets = new ArrayList<>(getPetsInternal());

PropertyComparator.sort(sortedPets,

new MutableSortDefinition("name", true, true));

return Collections.unmodifiableList(sortedPets);

}

Helidon code:

public List<Pet> getPets() {

var sortedPets = new ArrayList<>(getPetsInternal());

sortedPets.sort(Comparator.comparing(Pet::getName));

return Collections.unmodifiableList(sortedPets);

}

Another change I made was adding named queries to serve as a replacement for the Spring Data repositories. This is what I’ll discuss in the next section.

Spring Data is a part of the Spring framework designed for working with databases. Spring Data implements the repository pattern, which involves defining interfaces containing methods to retrieve data (entities or collections of entities). The framework automatically generates the implementation of these interfaces. Operations on data and search criteria are defined by method names. For instance, the Collection<Pet> findAll() method retrieves all pets, while void save(Pet pet) updates the given Pet object in the database. This abstraction eliminates the need to understand the underlying query language. While some may find it convenient, I personally prefer working with SQL statements as they are more intuitive to me. I suppose I’m just too old-fashioned.

To do this the Helidon way, I used JPQL queries, which I find preferable. In the service layer, rather than calling methods from repositories, I utilize the pure JPA API to achieve the same results.

First step is injecting the entity manager into ClinicServiceImpl:

@PersistenceContext(unitName = "pu1")

private EntityManager entityManager;

The entity manager will handle all database operations, which were previously handled by the repositories.

Next, I went through all methods and replaced repository usage with equivalent code using the entity manager, following certain patterns.

For find* methods a named query in the corresponding entity class has to be created and executed in the body of a method.

public List<Pet> findAllPets() {

return entityManager.createNamedQuery("findAllPets",

Pet.class).getResultList();

}

@NamedQueries({

@NamedQuery(name = "findAllPets",

query = "SELECT p FROM Pet p")

})

public class Pet extends NamedEntity {

...

}

The ClinicService.save* methods combine ‘create’ and ‘update’ functionality. However, JPA provides separate methods for creating and updating entities. Therefore, I needed to separate these operations in the code, as shown in the sample below.

@Transactional

public void saveOwner(Owner owner) {

if (owner.isNew()) {

entityManager.persist(owner);

} else {

entityManager.merge(owner);

}

}

The replacement of delete methods is straightforward, but there is one pitfall that users need to understand and know how to avoid. Here is a sample of how it’s done for most entities:

@Transactional

public void deleteOwner(Owner owner) {

entityManager.remove(owner);

}

However, for some entities, it needs to be handled differently. There are cases where an entity is dependent and managed by its parent entity. Such a relationship exists between the Owner and Pet entities. Owner contains a set of Pet entities annotated with cascade = CascadeType.ALL.

public class Owner extends Person {

@OneToMany(cascade = CascadeType.ALL,

mappedBy = "owner",

fetch = FetchType.EAGER,

orphanRemoval = true)

private Set<Pet> pets;

...

}

In this case, to remove a pet, instead of invoking entityManager.remove(pet), you need to delete it from the Owner.pets set and call entityManager.merge(owner).

@Transactional

public void deletePet(Pet pet) {

var owner = pet.getOwner();

owner.deletePet(pet);

entityManager.merge(owner);

entityManager.flush();

}

This is a legitimate use case. You can read more about it here.

The handling of transactions differs between Helidon and Spring. Spring utilizes Spring Transactions, while Helidon uses Jakarta Transactions (JTA). However, these two APIs are similar. The project employs declarative transactions, defined by placing the @Transactional annotation on methods that need to be executed within a transaction. I only replaced the import from org.springframework.transaction.annotation.Transactional to jakarta.transaction.Transactional. Additionally, since JTA does not support read-only transactions, I removed the readOnly = true parameter from Spring’s @Transactional annotation when present. Furthermore, I optimized the code by removing transaction support from methods that do not require transactions, such as methods that only read data from the database without writing to it.

Migrating REST controllers to Helidon is one of the complicated tasks. Source code is different because Helidon uses Jakarta RESTful Web Services (JAX-RS) and Spring uses proprietary libraries. I had to rewrite all REST controllers manually. There are some patterns which can be used, but it’s not as obvious as it is with data repositories.

@RequestScoped (1)

public class PetResource implements PetService { (2)

@Context (3)

UriInfo uriInfo;

private final ClinicService clinicService;

private final PetMapper petMapper;

@Inject (4)

public PetResource(ClinicService clinicService,

PetMapper petMapper) {

this.clinicService = clinicService;

this.petMapper = petMapper;

}

@Override

public Response addPet(PetDto petDto) { (5)

var owner = clinicService.findOwnerById(

petDto.getOwnerId()).orElseThrow();

var pet = petMapper.toPet(petDto);

pet.setOwner(owner);

clinicService.savePet(pet);

var location = UriBuilder

.fromUri(uriInfo.getBaseUri())

.path("api/pets/{id}")

.build(pet.getId());

return Response.created(location)

.entity(petMapper.toPetDto(pet))

.build();

}

...

}

<1> – Makes this class a request scoped CDI bean

<2> – Implements PetService generated interface. This interface contains JAX-RS annotations defining paths, HTTP methods, etc.

<3> – Injecting UriInfo class usng JAX-RS @Context annotation

<4> – Constructor injection of ClinicService and PetMapper

<5> – JAX-RS method used to add a pet

Remember that this project is API first and REST resources and the model classes it operates are generated from the OpenAPI document. All Rest controllers implement these generated interfaces. And these interfaces are different for Spring and Helidon.

I’ll explain the OpenAPI plugin configuration in the next section.

To generate code out of OpenAPI document, I used OpenAPI plugin provided by Helidon. I couldn’t use a plugin used in the original project because it generates the Spring code, which is not compatible with Helidon. You can find the plugin configuration in the project pom.xml file. I won’t go deep into the configuration. The only thing I mention is an option to skip generating data model tests. I couldn’t find it in documentation. The option is <generateModelTests>false</generateModelTests>.

There are some differences in how these two generators work. First difference I noticed is handling read only fields. Spring generator doesn’t do anything and treats read only fields as all other fields. It means that they are mutable and not read only. Helidon generator behaves differently. It treats read only fields as immutable fields. The values are passed as the constructor parameters and there are no setters. Which makes them truly immutable. On the other hand, it adds some complications when migrating from the Spring approach. The major issue is that no-args constructor cannot be used anymore when converting entities to DTOs. I’ll explain how to deal with it in Mapstruct section below.

Another issue I faced was different handling of tags. Helidon plugin uses tags to group operations into services.

In the Open API document tags are defined like this:

tags:

- name: owner

description: Endpoints related to pet owners.

- name: pet

description: Endpoints related to pets.

...

If you look at the paths, there is addPetToOwner operation at /owners/{ownerId}/pets with pet tag. All other Owner-related operations are tagged with owner.

paths:

/owners/{ownerId}:

get:

tags:

- owner

operationId: getOwner

/owners/{ownerId}/pets:

post:

tags:

- pet

operationId: addPetToOwner

summary: Adds a pet to an owner

description: Records the details of a new pet.

As result, Helidon generator places addPetToOwner method in PetService and all other Owner related methods to OwnerService. It causes paths collision because the base path of OwnerService is /owner and it supposed to handle all sub-paths too. But there is /owners/{ownerId}/pets handler in PetService.

I fixed it by changing a tag in /owners/{ownerId}/pets path to owner.

Mapstruct is a library for generating converters between Java beans. It’s based on an annotation processor and does generation at build-time. It’s used in the project to generate mappers between entities and DTOs. It can be configured for usage in Spring projects as well as in CDI based Jakarta EE projects. I used the second option because Helidon project is a CDI based application. To do it add the following to Mapstruct Maven plugin configuration:

<compilerArgs>

<compilerArg>

-Amapstruct.defaultComponentModel=jakarta-cdi

</compilerArg>

...

</compilerArgs>

One of the issues I had to solve with Mapstruct is making it work with DTOs generated by Helidon Open API generator. I mentioned above that Helidon generator doesn’t generate setters for fields marked as read only in the OpenAPI document. It generates a constructor with parameters where initial values of all read only fields must be passed. It requires a special treatment using object factories in Mapstruct. Technically, it requires creation of a factory class which describes how objects are created using non-default constructor.

The sample below contains two methods annotated with @ObjectFactory annotation. These methods will be used to create objects specified as their return types: createVetDto creates VetDto, createOwnerDto creates OwnerDto. Constructor arguments are passed as method parameters.

@ApplicationScoped

public class DtoFactory {

@ObjectFactory

public VetDto createVetDto(Vet vet) {

return new VetDto(vet.getId());

}

@ObjectFactory

public OwnerDto createOwnerDto(Owner owner) {

return new OwnerDto(owner.getId(), new ArrayList<>());

}

...

}

Object factory must be specified in @Mapper annotation uses parameter of the interface which creates that particular object as it’s shown in a snippet below.

@Mapper(uses = {DtoFactory.class, SpecialtyMapper.class})

public interface VetMapper {

VetDto toVetDto(Vet vet);

...

}

The original projects was nicely covered with tests, so my task was to do the same for my port. I rewrote the original tests and added tests for mappers and integration tests. Tests, the same as REST controllers, need to be reworked. The rework is smaller for the service tests and much bigger for Rest controllers tests.

All service layer tests are located in io.helidon.samples.petclinic.service. My project supports only one database rather than the original project supporting three databases, which makes the task easier. The tests perform real database operations in database allowing us to test all aspects of database operations including JPQL queries and CRUD operations. The tests look very similar to the original project tests. I copy/pasted the most of the code. The difference is that Spring supports transactions rollback after test method execution if the test method annotated with @Transactional. It’s very convenient because the database always kept unchanged. Helidon doesn’t have this feature, but I managed to simulate it by starting a user transaction before each method call and rolling it back after it. I collected all ‘transactional’ tests in ClinicServiceTransactionalTest class. All other tests which don’t change the data are in ClinicServiceTest class.

In the original Rest controller tests, mocking is utilized. Spring provides a nice MockMVC testing framework, which is Spring-specific. Consequently, I opted for pure Mockito. Personally, I’m not a huge fan of mocking because sometimes tests using mocks end up testing the mocks themselves rather than the actual logic. However, this isn’t true for all cases; mock tests run faster and developers are accustomed to them.

The typical Helidon mocking test class is demonstrated in the code snippet below. I utilize the @HelidonTest annotation to initiate a CDI container, in conjunction with the Mockito extension @ExtendWith(MockitoExtension.class).

Bootstrapping Mockito is slightly tricky because my JAX-RS resource uses constructor injection, and I also need to mock the field-injected UriInfo class. Mockito poorly supports the use case when both constructor and field injection are used. Consequently, I had to manually create mocks for constructor-injected classes and use declarative mocking with the @Mock annotation for field-injected UriInfo.

Despite these challenges, the test methods look like typical Mockito tests.

@HelidonTest

@ExtendWith(MockitoExtension.class)

public class PetResourceTest {

ClinicService clinicService;

@Inject

PetMapper petMapper;

@Mock

UriInfo uriInfo;

@InjectMocks

PetResource petResource;

@BeforeEach

void setup() {

clinicService = Mockito.mock(ClinicService.class);

petResource = new PetResource(clinicService, petMapper);

MockitoAnnotations.openMocks(this);

}

@Test

void testAddPet() {

var petDto = createPetDto();

var owner = createOwner();

Mockito.when(uriInfo.getBaseUri())

.thenReturn(URI.create("http://localhost:9966/petclinic"));

Mockito.when(clinicService.findOwnerById(1))

.thenReturn(Optional.of(owner));

var response = petResource.addPet(petDto);

assertThat(response.getStatus(), is(201));

assertThat(response.getLocation().toString(),

equalTo("http://localhost:9966/petclinic/api/pets/1"));

var pet = (PetDto) response.getEntity();

assertThat(pet.getId(), equalTo(petDto.getId()));

assertThat(pet.getName(), equalTo(petDto.getName()));

assertThat(pet.getOwnerId(), equalTo(petDto.getOwnerId()));

}

...

}

I’ve decided to include integration tests in the project. You can find them in the io.helidon.samples.petclinic.integration package. Ultimately, it’s the most robust way to test all application layers. I’ve utilized the Maven Failsafe plugin to execute the integration tests. Below is the failsafe plugin configuration:

<plugin>

<artifactId>maven-failsafe-plugin</artifactId>

<version>3.2.5</version>

<executions>

<execution>

<goals>

<goal>integration-test</goal>

<goal>verify</goal>

</goals>

</execution>

</executions>

<configuration>

<classesDirectory>${project.build.directory}/classes</classesDirectory>

</configuration>

</plugin>

Please pay attention to the <classesDirectory>${project.build.directory}/classes</classesDirectory> configuration option. The plugin won’t function properly without it.

The typical integration test is illustrated in the snippet below. I’m using the @HelidonTest annotation to initiate a CDI container and the Helidon web server, and I’m injecting a web target pointing to the running web server. This is a feature of the Helidon testing framework. In the test methods, I call a REST endpoint, retrieve the result, and verify its correctness. This tests the entire chain of layers from the REST resource to the database.

@HelidonTest

class PetResourceIT {

@Inject

private WebTarget target;

@Test

void testListPets() {

var pets = target

.path("/petclinic/api/pets")

.request()

.get(JsonArray.class);

assertThat(pets.size(), greaterThan(0));

assertThat(pets.getJsonObject(0).getInt("id"),

is(1));

assertThat(pets.getJsonObject(0).getString("name"),

equalTo("Jacka"));

}

...

}

You can execute all integration tests using the mvn integration-test command.

I composed my summary as a small FAQ.

Is it possible to migrate a Spring project to Helidon?

Definitely yes.

Is it difficult?

Typically no, but it depends on the project. I spent about a day to migrate database and service layer, about 2 days to migrate REST controllers, about a week to migrate tests, and more than a week to write this article. At the end, testing and documenting work is more time-consuming than developing.

Is Helidon different from Spring?

Yes it is, but there are the same or similar components/frameworks/specs used in both, so if you know Spring, Helidon doesn’t look as an alien and visa versa.

What are the advantages of Helidon?

Helidon is based on Java 21 Virtual Threads and is very fast. It supports Jakarta EE and MicroProfile, so it’s a great choice if you are standards-minded.

Where I can find additional information about Helidon?

https://helidon.io and https://medium.com/helidon

If you’re thinking, “Hey, Dmitry! This is a brilliant article, I enjoyed reading it!” you can share a link to this article on social networks as an act of appreciation.

Thank you!

With the release of Jetty 12.0.8, we’re excited to announce the (re)implementation of a somewhat maligned and deprecated feature: Cross-Context Dispatch. This feature, while having been part of the Servlet specification for many years, has seen varied levels of use and support. Its re-introduction in Jetty 12.0.8, however, marks a significant step forward in our commitment to supporting the diverse needs of our users, especially those with complex legacy and modern web applications.

Cross-Context Dispatch allows a web application to forward requests to or include responses from another web application within the same Jetty server. Although it has been available as part of the Servlet specification for an extended period, it was deemed optional with Servlet 6.0 of EE10, reflecting its status as a somewhat niche feature.

Initially, Jetty 12 moved away from supporting Cross-Context Dispatch, driven by a desire to simplify the server architecture amidst substantial changes, including support for multiple environments (EE8, EE9, and EE10). These updates mean Jetty can now deploy web applications using either the javax namespace (EE8) or the jakarta namespace (EE9 and EE10), all using the latest optimized jetty core implementations of HTTP: v1, v2 or v3.

The decision to reintegrate Cross-Context Dispatch in Jetty 12.0.8 was influenced significantly by the needs of our commercial clients, some who still leveraging this feature in their legacy applications. Our commitment to supporting our clients’ requirements, including the need to maintain and extend legacy systems, remains a top priority.

One of the standout features of the newly implemented Cross-Context Dispatch is its ability to bridge applications across different environments. This means a web application based on the javax namespace (EE8) can now dispatch requests to, or include responses from, a web application based on the jakarta namespace (EE9 or EE10). This functionality opens up new pathways for integrating legacy applications with newer, modern systems.

The reintroduction of Cross-Context Dispatch in Jetty 12.0.8 is more than just a nod to legacy systems; it can be used as a bridge to the future of Java web development. By allowing for seamless interactions between applications across different Servlet environments, Jetty-12 opens the possibility of incremental migration away from legacy web applications.

This tutorial will show how to build a powerful combination: a React frontend that seamlessly interacts with data from a Jakarta EE REST backend application running as a WildFly bootable JAR. Prerequisites: Step # 1 Define the Rest Endpoint Firstly, bootstrap a Jakarta EE application. You can either use WildFly archetypes ( see Maven archetype ... Read more

The post Consuming Jakarta EE REST Services with React appeared first on Mastertheboss.

In this article, we will learn how to debug a Quarkus application using two popular Development Environments such as IntelliJ Idea and VS Studio. We’ll explore how these IDEs can empower you to effectively identify, understand, and resolve issues within your Quarkus projects. Enabling Debugging in Quarkus When running in development mode, Quarkus, by default, ... Read more

The post How to debug Quarkus applications appeared first on Mastertheboss.

In this article we will learn some of the available tools or plugins you can use to migrate your legacy Java EE/Jakarta EE 8 applications to the newer Jakarta EE environment. We will also discuss the common challenges that you can solve by using tools rather than performing a manual migration of your projects. Challenges ... Read more

The post Simplifying migration to Jakarta EE with tools appeared first on Mastertheboss.

Whether you’re a beginner exploring OpenShift for the first time or an experienced user looking for quick references, this cheat sheet is designed to provide you with a CheatSheet of OpenShift commands, concepts, and best practices. From managing pods and services to setting up routes and exploring advanced deployment strategies, we’ve got you covered. Login ... Read more

The post Openshift Cheatsheet for DevOps appeared first on Mastertheboss.

Jakarta Data API is a powerful specification that simplifies data access across a variety of database types, including relational and NoSQL databases. In this article you will learn how to leverage this programming model which will be part of Jakarta 11 bundle. Overview of Jakarta Data API Jakarta.Data API: Offers a higher-level abstraction for data ... Read more

The post Getting Started with Jakarta Data API appeared first on Mastertheboss.

This article will teach you how to use a plain Datasource resource in a Quarkus application. We will create a simple REST Endpoint and inject a Datasource in it to extract the java.sql.Connection object. We will also learn how to configure the Datasource pool size. Quarkus Connection Pool Quarkus uses Agroal as connection pool implementation ... Read more

The post How to use a Datasource in Quarkus appeared first on Mastertheboss.

Docker-compose allows you to access container ports in two different ways: using “ports” and “expose:”. In this tutorial we will learn what is the difference between “ports” and “expose:” providing clear examples. Before diving into the differences between “ports” and “expose”, we recommend checking this article if you are new to Docker-Compose: Orchestrate containers using ... Read more

The post Docker-compose: ports vs expose explained appeared first on Mastertheboss.

This article will detail how to get started quickly with Jakarta EE which is the new reference specification for Java Enterprise API. As most of you probably know, the Java EE moved from Oracle to the Eclipse Foundation under the Eclipse Enterprise for Java (EE4J) project. There are already a list of application servers which ... Read more

The post Getting started with Jakarta EE appeared first on Mastertheboss.

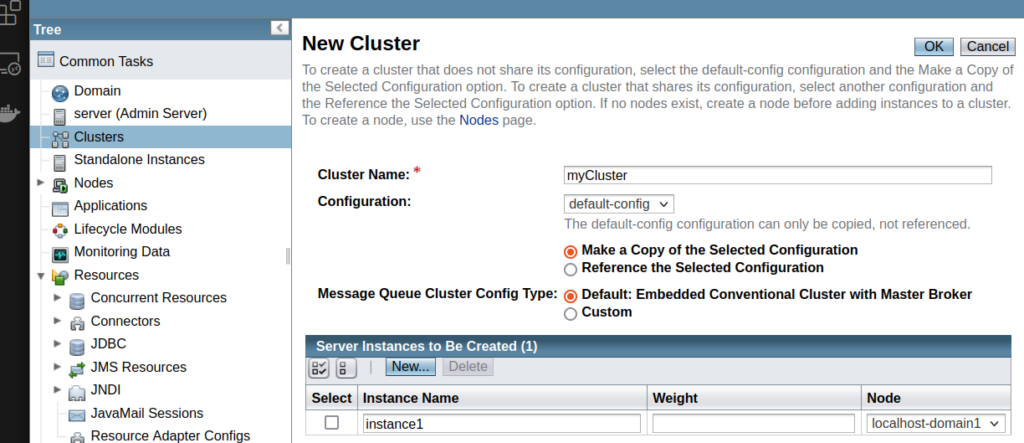

Setting up a GlassFish server cluster with an Apache HTTP server as a load balancer involves several steps: